The network for creativity

Join 1.25M professional creatives like you

Connect with clients, get discovered, and run your business 100% commission-free

Creatives on Contra have earned over $150M and we are just getting started

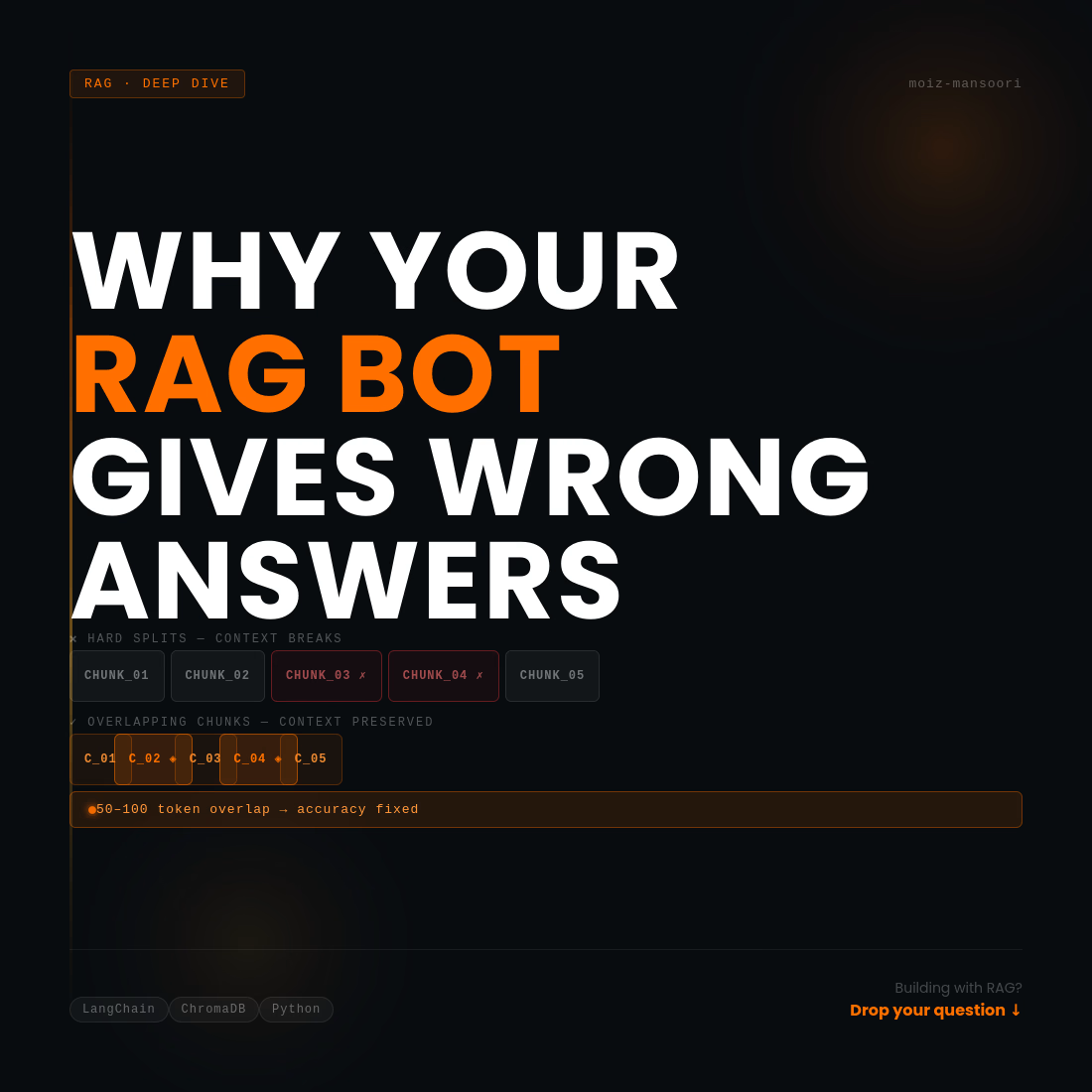

Most RAG chatbots give wrong answers. Here's why 👇

The problem isn't the LLM.

It's how documents are chunked.

Most developers split text like this:

Every 500 tokens → new chunk. Done.

The result?

One sentence is in chunk 3.

The next related sentence is in chunk 4.

The chatbot retrieves only one — and gives a half-answer.
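Here is a minimal sketch of that hard split. It assumes whitespace-separated words stand in for tokens (a real pipeline would use the model's tokenizer), and the function name `split_fixed`, the sample text, and the tiny chunk size of 6 are illustrative choices, not taken from any specific library.

```python
def split_fixed(tokens, chunk_size):
    """Split a token list into consecutive, non-overlapping chunks."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    return [tokens[i:i + chunk_size] for i in range(0, len(tokens), chunk_size)]


# Two related sentences; words stand in for tokens (an assumption).
text = "Refunds are available within 30 days. Requests after that need manager approval."
tokens = text.split()

# A chunk size of 6 is used only to keep the example small.
chunks = split_fixed(tokens, 6)
for i, chunk in enumerate(chunks):
    print(i, " ".join(chunk))
```

The first sentence lands entirely in one chunk and the follow-up lands in the next, so a retriever that returns only one of them sees half the policy.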

The fix is simple: overlap your chunks.

Instead of hard splits, let each chunk share 50-100 tokens with the next one. Context stays connected across chunk boundaries, and answers get more accurate.
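A rough sketch of overlapping chunks, under a few assumptions: tokens are already a plain Python list, the chunk size is 500, and the overlap is 75 (picked from the 50-100 range). The function name `split_with_overlap` and the placeholder tokens are hypothetical, for illustration only.

```python
def split_with_overlap(tokens, chunk_size, overlap):
    """Split tokens into chunks where each chunk repeats the last
    `overlap` tokens of the previous one."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")

    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + chunk_size])
        # Stop once a chunk has reached the end of the document.
        if start + chunk_size >= len(tokens):
            break
    return chunks


# Placeholder tokens t0..t1199 stand in for a tokenized document.
tokens = [f"t{i}" for i in range(1200)]
chunks = split_with_overlap(tokens, chunk_size=500, overlap=75)

# The tail of one chunk is the head of the next.
print(chunks[0][-75:] == chunks[1][:75])
```

The trade-off is some duplicated text in the index, so the overlap is worth tuning against your own documents rather than treating 75 as a fixed answer.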

I ran into this exact issue while building a RAG system for document Q&A. Switched to overlapping chunks — accuracy improved immediately.

Small change. Big difference.

Building something with RAG or AI development? Drop your question below 👇
