The network for creativity

Join 1.25M professional creatives like you

Connect with clients, get discovered, and run your business 100% commission-free

Creatives on Contra have earned over $150M and we are just getting started


Why I’m moving my AI dev workflow to Local LLMs (and why clients should care) 🤖💻

The "AI coding" hype is everywhere, but as a Senior Developer, I’ve found that the real magic happens when you take the LLM off the cloud and run it on your own hardware.

I’ve been deep-diving into Local LLMs and Agentic coding workflows lately, and it’s completely changed my architectural process. Here’s why this is a game-changer for high-stakes development:

1️⃣ Privacy & Security: For my clients, data is everything. Running models locally (using Ollama or LM Studio) means proprietary logic never leaves the machine: prompts and code are not sent to a third-party API, so they can't be logged or used to train someone else's model.

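2️⃣ No Network Round-Trips, Pure Focus: Local inference removes the network hop and the rate limits of a cloud API (response time then depends on your hardware and model size). Agentic workflows, where AI agents handle the boilerplate, unit tests, and documentation, let me focus on system design and complex logic.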

3️⃣ Custom Context: By giving local agents deep context of a specific codebase, they become "Junior Partners" who actually understand the architecture, rather than just guessing.
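To make points 1 and 3 concrete, here is a minimal sketch of sending a prompt with codebase context to a model running on the same machine. It assumes Ollama is serving on its default local port (11434) and that a model has already been pulled; the model name `llama3` and the helper names `build_prompt`, `build_request`, and `ask_local_model` are placeholders of mine, not part of any tool. LM Studio exposes a different, OpenAI-compatible endpoint, so this would need adapting there.

```python
import json
import urllib.request

# Assumption: Ollama's default local endpoint. Nothing here leaves localhost.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_prompt(task: str, files: dict[str, str]) -> str:
    """Prepend selected source files to the task so the model sees real project code."""
    parts = [f"### {name}\n{body}" for name, body in sorted(files.items())]
    return "\n\n".join(parts + [f"### Task\n{task}"])


def build_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    """Build a non-streaming generate request aimed at the local server."""
    payload = json.dumps(
        {"model": model, "prompt": prompt, "stream": False}
    ).encode("utf-8")
    return urllib.request.Request(
        OLLAMA_URL,
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt: str, model: str = "llama3") -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=120) as resp:
        return json.loads(resp.read())["response"]
```

With a local server running, usage would look like `ask_local_model(build_prompt("Write unit tests for parse()", {"parser.py": source}))`. A real agent would choose which files to include more carefully, since local models usually have a smaller context window.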

AI isn't here to replace the developer; it’s here to automate the "noise." A Senior Architect plus a fleet of local agents is the most efficient way to build scalable software in 2026.

Are you still using cloud-only AI, or have you made the switch to a local-first workflow? What’s the biggest "agentic" win you’ve had this week? 👇


Related posts

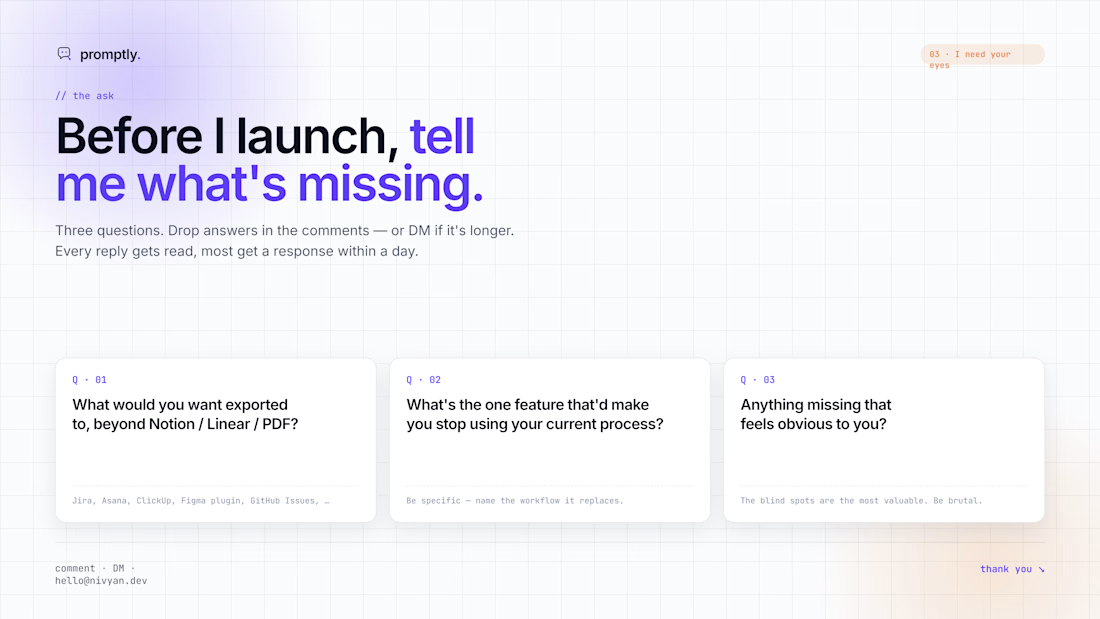

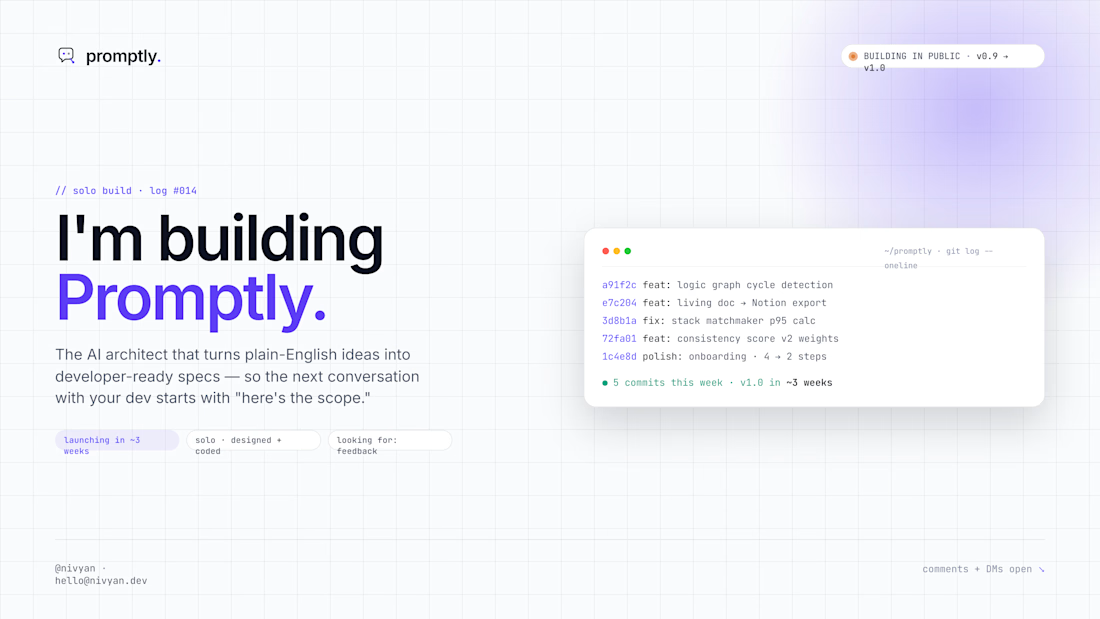

Hey Contra 👋 I've been heads-down building Promptly, and I'd love your eyes on it before our launch.

From my time delivering over 20 software projects as a tech lead, I realized the biggest bottleneck in building an app isn't the code itself. It is communication. Founders speak in ideas, and developers speak in strict logic.

Here is how Promptly works. You just describe your app idea in plain English. The AI actually pushes back, asks the hard questions, catches the vague bits, and automatically writes a developer-ready blueprint as you chat.

The goal is simple. When you hand this document to a developer, the next thing you get back is an accurate, fixed-price quote. No more wasting days trying to draft technical specifications and sitting through endless rounds of discovery calls just to get on the same page.

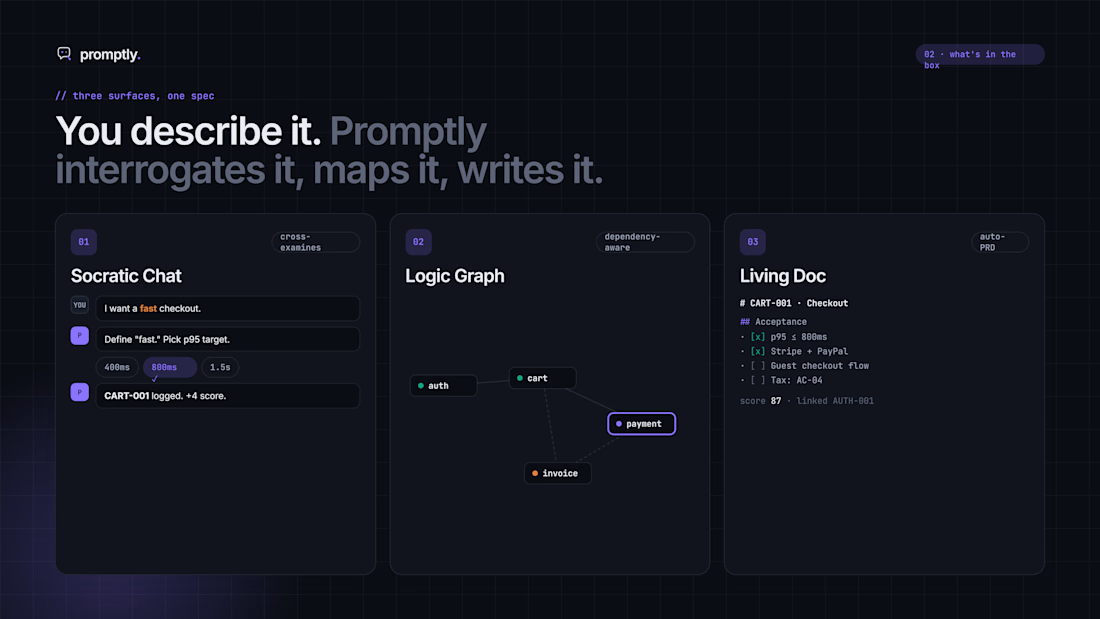

Instead of staring at a blank document, you get three tools that update in real-time:

The Pushback Chat: It refuses to just nod along. If you say "make it fast and secure," it helps you define exactly what that means. It hunts down edge cases before you ever pay someone to fix them.

The Visual Map: A live, interactive diagram showing exactly how your features connect. It catches logic flaws and missing steps before any code is written.

The Living Blueprint: An auto-generated, professional project spec that exports straight to Notion, Linear, or PDF.

Plus, it gives your idea a "Developer-Readiness Score" out of 100 so you know if it is actually ready to build. It even recommends the best tech stack based on your specific budget and complexity.

The core engine and all of these interactive features are fully functional right now, but I am still smoothing out the final rough edges. I am aiming for a public launch in about three weeks.

Where I need your help. I have three quick questions:

Where would you want to export your project blueprint, beyond Notion, Linear, or PDF?

What is the biggest headache you face right now when trying to explain your ideas to developers?

What feels missing? The blind spots are the most valuable to me right now.

Any feedback on new features, improvements, or things I should kill entirely would be hugely appreciated. Drop a comment below.

Thank you for taking a look 🙏

building solo, in public

Product Management, Full Stack Development, Software Development, Web Development, AI Development, Claude, Next.js, Figma

This is a very interesting concept! Where did you design the UI/UX? I feel like a better choice of font is still out there. Overall good work and clean UI. 🔥

AI-Powered CRM Built To Capture And Qualify Leads

Built a lead qualification and CRM workflow that captures inquiries, filters serious prospects, and helps the team follow up faster.

❌ Before Automation

→ Leads were coming in, but the process was not clean.

→ New inquiries had to be checked manually.

→ Some leads needed a quick follow-up.

→ Others were not the right fit.

The team had no easy way to separate serious prospects from low-quality leads.

This created 4 problems:

• Slow lead response

• Manual lead checking

• Missed follow-ups

• No clear CRM visibility


✅ After Automation

We built an AI-powered lead qualification and CRM system.

The system captures new leads, verifies the information, qualifies prospects, and sends the right data to the CRM. This gives the team a cleaner pipeline and faster follow-up process.

Now the business can see:

• Who the lead is

• What they need

• How serious they are

• Where they are in the pipeline

• What action should happen next
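The capture, verify, qualify, and route steps described above could be sketched roughly as follows. This is a rule-based stand-in for the AI qualification step, not the system from the post: the `Lead` and `CrmRecord` types, the budget threshold, and the next-action strings are all hypothetical. The record fields mirror the five things the list says the business can now see.

```python
import re
from dataclasses import dataclass

# Deliberately loose email check; a real system would verify deliverability.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


@dataclass
class Lead:
    name: str
    email: str
    need: str
    budget_usd: int


@dataclass
class CrmRecord:
    who: str          # who the lead is
    need: str         # what they need
    seriousness: str  # how serious they are
    stage: str        # where they are in the pipeline
    next_action: str  # what should happen next


def qualify(lead: Lead, min_budget_usd: int = 1000) -> CrmRecord:
    """Verify the lead, score it with simple rules, and build the CRM record."""
    if not EMAIL_RE.match(lead.email):
        return CrmRecord(lead.name, lead.need, "unverified", "new",
                         "request valid contact details")
    if lead.budget_usd >= min_budget_usd and lead.need.strip():
        return CrmRecord(lead.name, lead.need, "high", "qualified",
                         "follow up within 24 hours")
    return CrmRecord(lead.name, lead.need, "low", "nurture",
                     "add to newsletter")
```

In an AI-powered version, the rule checks inside `qualify` would presumably be replaced by a model call that reads the inquiry text, while the record shape sent to the CRM could stay the same.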

See The Full Workflow here

AI Is Making Small Teams Dangerous

A few years ago, building a serious product needed a large engineering team.

Now?

A small team with the right AI workflow can move insanely fast.

One developer can:

- generate UI prototypes

- scaffold APIs

- automate testing

- write documentation

- build internal tools

- create marketing assets

The speed difference is becoming unreal.

The companies that adapt early will outperform much bigger competitors.

Not because they have more people.

Because they remove more friction.

#AITools #Automation #TechInnovation #SoftwareDevelopment #AIWorkflow

Yeah absolutely agree, Julia
