The network for creativity

Join 1.25M professional creatives like you

Connect with clients, get discovered, and run your business 100% commission-free

Creatives on Contra have earned over $150M and we are just getting started

Yesterday OpenAI released GPT‑Image‑2, and the generative graphics race just sped up again.

The new model finally handles text inside images properly. Posters, banners, UI mockups: the letters no longer “swim,” and both Cyrillic and CJK characters are readable. For anyone building interfaces and promotional materials for a global audience, that’s a meaningful step forward.

GPT‑Image‑2 handles complex scenes better, keeps dense layouts from falling apart, and can generate a series of related images. It can also pull fresh content from the web before generation, so app screenshots and interfaces look like they do now, not like they did a year ago.

At IZUM.STUDY we’re currently preparing a large new course on front‑end layout. We needed realistic studio shots for promotional materials and course covers, so I decided to test GPT‑Image‑2 in a real scenario. The image from the model is attached to this post; just look at the level of detail. The plasticky AI look and glossy lighting are gone, and the frame feels much closer to a real studio shot.

It’s clear the bar for generative visuals has gone up again. The only question now is who will integrate these capabilities into real workflows, and how.

How do you plan to use GPT‑Image‑2: interfaces, promo, storyboards? Share your ideas in the comments 👇

Related posts

GPT Image 2.0

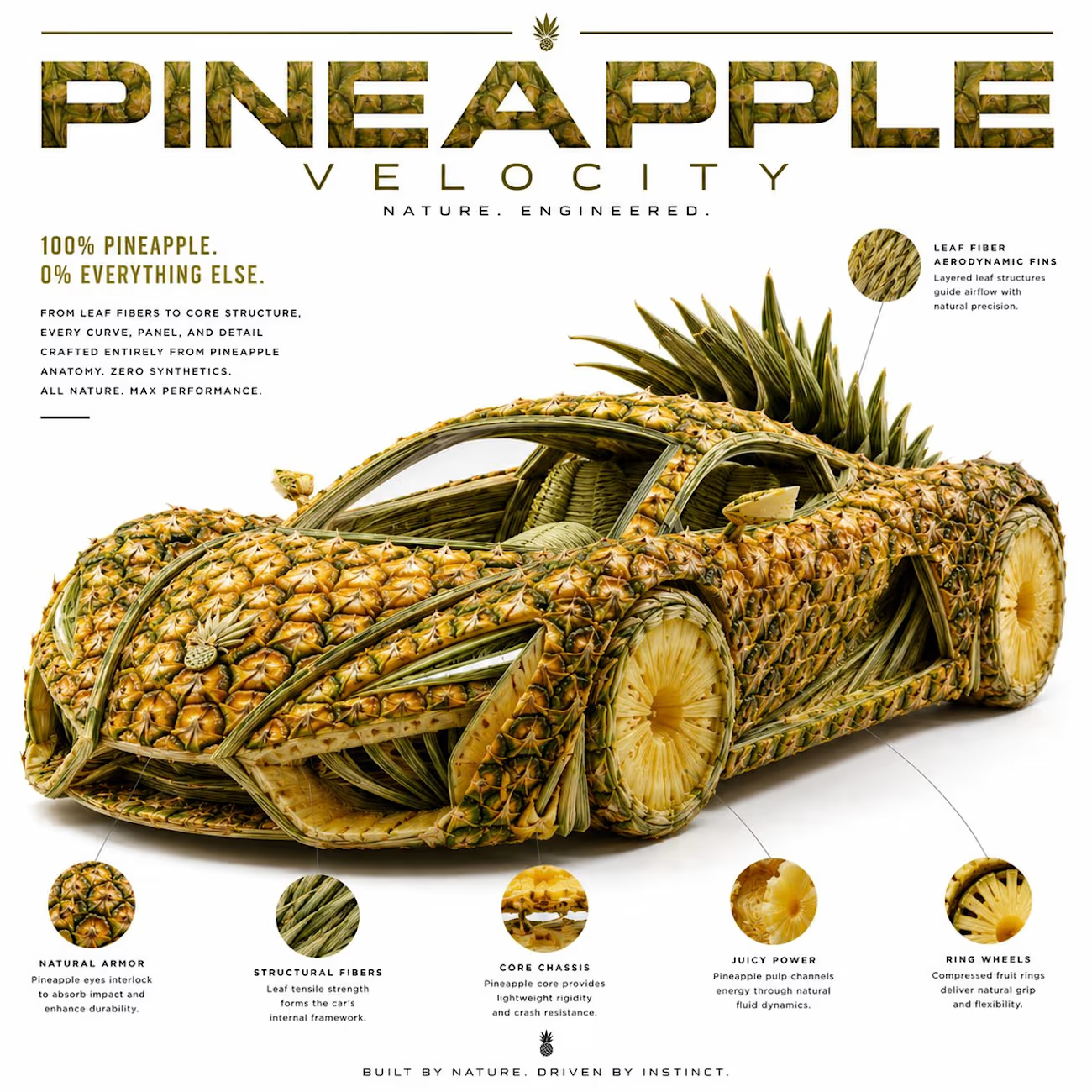

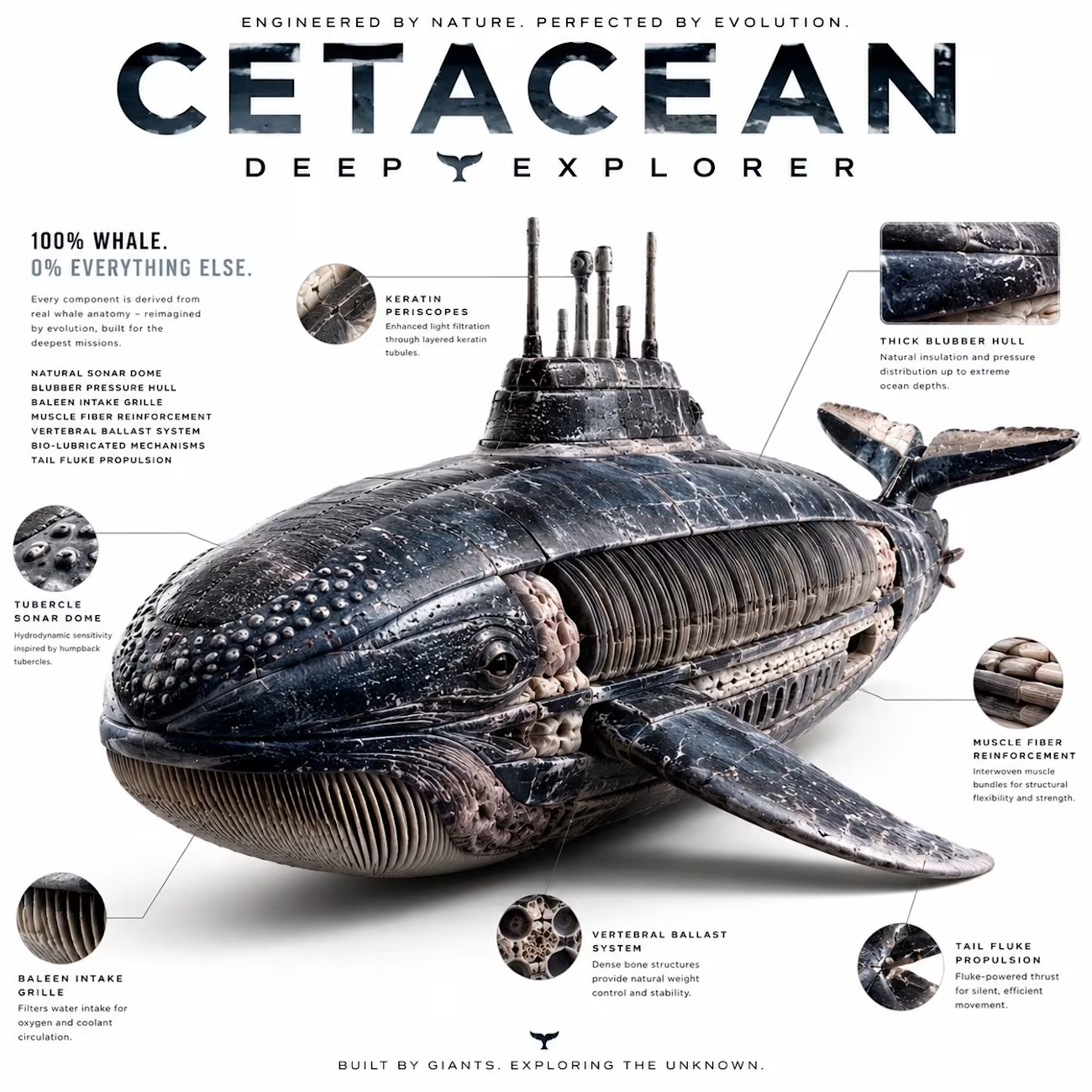

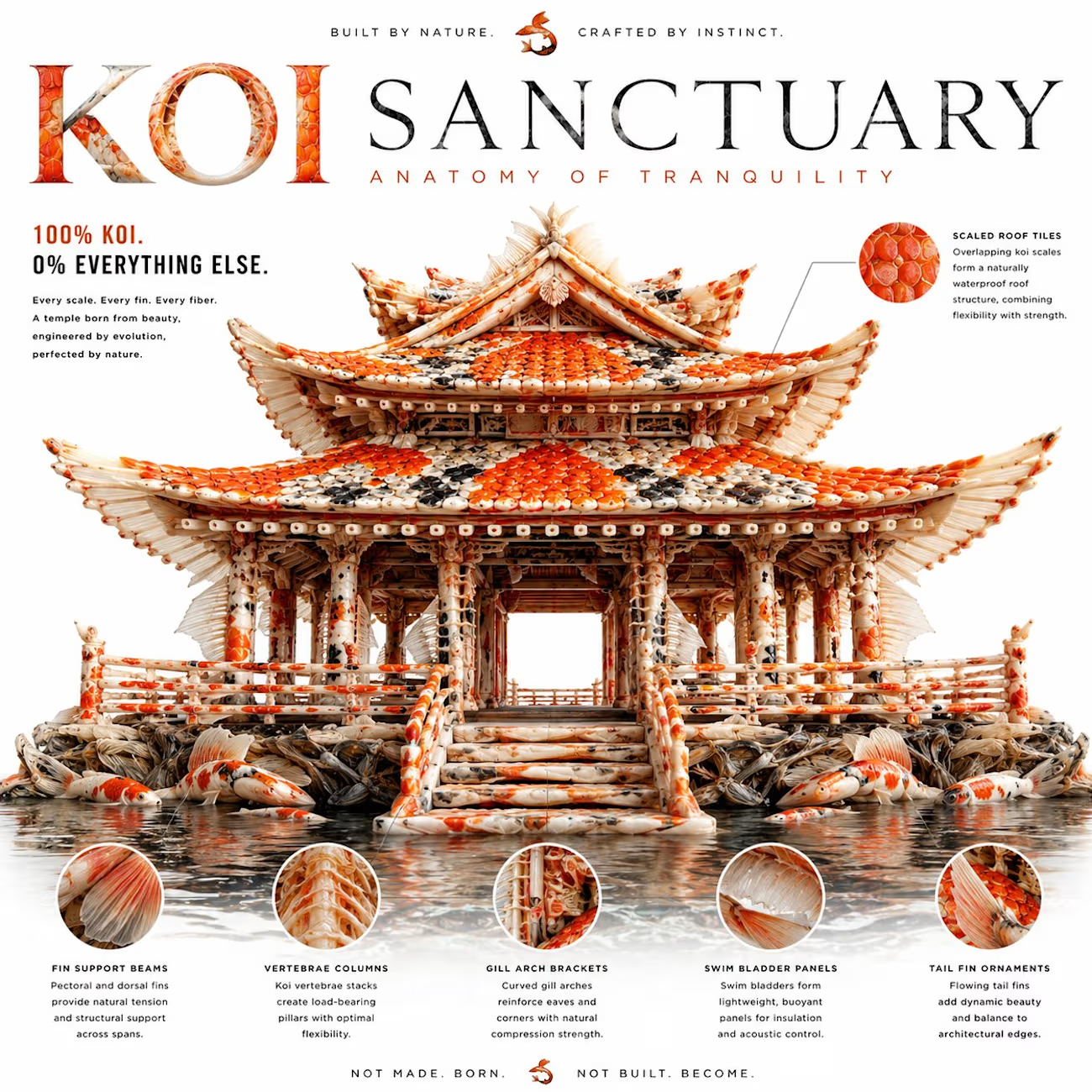

This prompt creates insane editorial posters.

Anything can become anything:

• A temple made from koi fish

• A submarine made from whale anatomy

• A car made from a pineapple

• A motorcycle made from a scorpion

Every detail is built ONLY from the source material.

Prompt

Create a high-end editorial poster of a [Subject 1] constructed entirely from [Subject 2] materials, components, anatomical structures, and internal elements. Every visible detail must be formed exclusively from authentic parts, textures, layers, patterns, fibers, surfaces, cross-sections, seeds, pulp, veins, stems, skin, flesh, cellular structures, organic geometry, or mechanical components originating only from [Subject 2], with absolutely no unrelated materials or ordinary surfaces visible anywhere in the image. If [Subject 2] is a fruit, vegetable, plant, or organic object, the construction must intelligently use both the exterior and interior anatomy of the material (including sliced sections, exposed pulp, seeds, fibers, membranes, stems, leaves, juice vesicles, gradients, and microscopic organic textures), seamlessly integrated into the form of [Subject 1]. The subject should remain instantly recognizable from a distance while revealing intricate construction upon closer inspection. The image should create a strong “zoom-in discovery effect,” filled with layered micro-details, visually satisfying assembly logic, hyper-realistic craftsmanship, and believable material logic. The construction should feel physically engineered and artistically intentional, where every curve, edge, panel, contour, and surface is logically assembled from transformed elements of [Subject 2] while preserving the identity, color language, texture characteristics, and structural DNA of the source material. Include a bold, automatically generated editorial headline, refined luxury magazine-style typography, a clean, mostly white studio background, subtle realistic shadows, premium studio lighting, ultra-detailed macro textures, sophisticated composition, strong visual hierarchy, and a collectible poster aesthetic.
Style: high-end editorial advertisement, hyper-detailed craftsmanship, premium product photography, futuristic art direction, ultra-clean composition, realistic textures, highly polished aesthetic, viral social-media poster design, aspect ratio 1:1

Nice work.

The task was to redraw an AI-generated illustration in vector format while matching the original with up to 90% accuracy.