The network for creativity

Join 1.25M professional creatives like you

Connect with clients, get discovered, and run your business 100% commission-free

Creatives on Contra have earned over $150M and we are just getting started


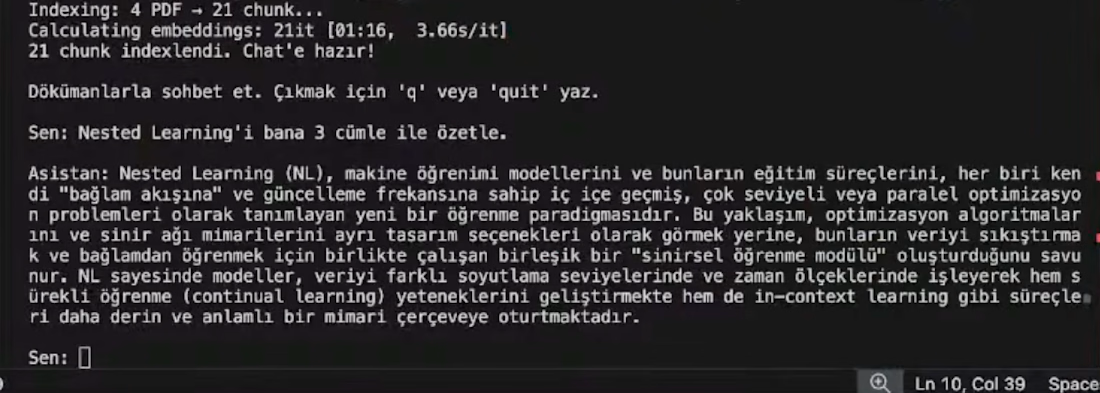

Built a Multimodal RAG system live on stream. From zero to working CLI in one session.

The goal: a terminal-based RAG pipeline built with Haystack, using Gemini Multimodal Embeddings for retrieval and Gemini Flash Lite for generation, that answers questions about complex research PDFs in a conversational loop.

Three real engineering lessons from the build:

Chunking isn't optional. Raw PDFs exhaust token limits instantly. Splitting them into 6-page chunks solved it.
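Here is a minimal sketch of the 6-page splitting. It assumes each PDF has already been extracted to one text string per page. `split_pages` is a hypothetical helper for illustration, not a Haystack component.

```python
from typing import List


def split_pages(pages: List[str], pages_per_chunk: int = 6) -> List[str]:
    """Group consecutive pages into chunks of at most `pages_per_chunk` pages.

    Assumes `pages` holds the extracted text of one PDF page per element.
    """
    if pages_per_chunk < 1:
        raise ValueError("pages_per_chunk must be at least 1")
    chunks = []
    for start in range(0, len(pages), pages_per_chunk):
        # Join one window of pages; the last chunk may be shorter.
        chunks.append("\n".join(pages[start:start + pages_per_chunk]))
    return chunks


pages = [f"page {i} text" for i in range(1, 15)]  # a hypothetical 14-page PDF
chunks = split_pages(pages)
print(len(chunks))  # 3: pages 1-6, 7-12, 13-14
```

Each chunk then gets embedded on its own, so no single request has to carry the whole document.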

InMemoryDocumentStore works for prototyping, but nothing persists between runs, so every chunk has to be re-embedded on each launch. A persistent database like Weaviate is the next step.
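Until a persistent database is in place, one stopgap is to cache vectors on disk. The cache is keyed by a hash of each chunk's text, so a relaunch only embeds new or changed chunks.

This is a minimal sketch under some assumptions:

- `embed` is a hypothetical function wrapping whatever embedding API you call.
- `embed_with_cache` and the JSON cache file are illustrative, not part of Haystack.
- It does not replace a store like Weaviate, which also handles indexing and search.

```python
import hashlib
import json
import tempfile
from pathlib import Path
from typing import Callable, Dict, List

Vector = List[float]


def embed_with_cache(
    chunks: List[str], embed: Callable[[str], Vector], cache_path: Path
) -> List[Vector]:
    """Return one vector per chunk, reusing vectors saved by earlier runs."""
    cache: Dict[str, Vector] = {}
    if cache_path.exists():
        cache = json.loads(cache_path.read_text())
    vectors = []
    for chunk in chunks:
        # Key by content hash, so an edited chunk gets re-embedded automatically.
        key = hashlib.sha256(chunk.encode("utf-8")).hexdigest()
        if key not in cache:
            cache[key] = embed(chunk)  # only new or changed chunks reach the API
        vectors.append(cache[key])
    cache_path.write_text(json.dumps(cache))
    return vectors


# Usage with a stand-in embedder (hypothetical) that records each call.
calls: List[str] = []


def fake_embed(text: str) -> Vector:
    calls.append(text)
    return [float(len(text)), 1.0]


with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "embeddings.json"
    first = embed_with_cache(["intro", "methods"], fake_embed, path)   # first launch
    second = embed_with_cache(["intro", "methods"], fake_embed, path)  # relaunch
print(len(calls))  # 2: the relaunch reused the cached vectors
```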

Haystack's pipelines are strict. Components have typed inputs and outputs, so mis-wiring a retriever to a generator breaks the loop immediately. API contracts matter.
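Why does mis-wiring fail fast? Haystack 2.x components declare typed input and output sockets, and the pipeline validates connections between them.

The toy below imitates that contract in plain Python. `ToyPipeline`, `Component`, and the socket names are illustrative assumptions, not Haystack's actual classes. It also shows a common fix: a retriever returns a list of documents, while a generator expects a prompt string, so a prompt builder sits between them.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class Component:
    """A toy component declaring the type of each input and output socket."""
    name: str
    inputs: Dict[str, type]
    outputs: Dict[str, type]


@dataclass
class ToyPipeline:
    """Checks socket types when wiring, before anything runs (illustration only)."""
    components: Dict[str, Component] = field(default_factory=dict)
    edges: List[Tuple[str, str]] = field(default_factory=list)

    def add(self, component: Component) -> None:
        self.components[component.name] = component

    def connect(self, sender: str, receiver: str) -> None:
        # Sockets are addressed as "component.socket".
        s_name, s_sock = sender.split(".")
        r_name, r_sock = receiver.split(".")
        out_type = self.components[s_name].outputs[s_sock]
        in_type = self.components[r_name].inputs[r_sock]
        if out_type is not in_type:
            raise TypeError(
                f"{sender} ({out_type.__name__}) cannot feed {receiver} ({in_type.__name__})"
            )
        self.edges.append((sender, receiver))


pipe = ToyPipeline()
pipe.add(Component("retriever", {"query_embedding": list}, {"documents": list}))
pipe.add(Component("prompt_builder", {"documents": list}, {"prompt": str}))
pipe.add(Component("generator", {"prompt": str}, {"replies": list}))

rejected = False
try:
    pipe.connect("retriever.documents", "generator.prompt")  # the mis-wiring
except TypeError as err:
    rejected = True
    print("rejected:", err)

# The working layout: documents pass through a prompt builder first.
pipe.connect("retriever.documents", "prompt_builder.documents")
pipe.connect("prompt_builder.prompt", "generator.prompt")
print(len(pipe.edges))  # 2
```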

Result: a CLI that retrieves, for each user query, the 4 most semantically similar chunks held in memory and returns grounded, non-hallucinated answers from your own documents.
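Retrieving the top 4 chunks comes down to ranking stored chunk embeddings by their similarity to the query embedding.

This is a minimal brute-force sketch under a few assumptions:

- It uses cosine similarity, a common choice; the post does not say which metric the retriever uses.
- Toy 2-D vectors stand in for real Gemini embeddings.
- `top_k` here is a hypothetical helper. In Haystack, an in-memory embedding retriever performs this ranking and takes a `top_k` parameter.

```python
import math
from typing import List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(
    query_vec: Sequence[float],
    chunk_vecs: List[Sequence[float]],
    chunks: List[str],
    k: int = 4,
) -> List[Tuple[float, str]]:
    """Brute force: score every stored chunk against the query, keep the k best."""
    scored = [(cosine(query_vec, vec), text) for vec, text in zip(chunk_vecs, chunks)]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]


# Toy 2-D vectors standing in for real embeddings.
chunks = ["intro", "methods", "results", "discussion", "appendix"]
chunk_vecs = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.7, 0.7], [0.2, 0.8]]
hits = top_k([1.0, 0.0], chunk_vecs, chunks)
print([text for _, text in hits])  # ['intro', 'methods', 'discussion', 'appendix']
```

Brute force touches every stored vector on each query. That is fine at prototype scale; an indexed vector database pays off once the corpus grows.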

Every mistake, every fix, on camera.

Ugurcan Uzunkaya, this is seriously impressive! 🔥 Building a full RAG pipeline live on stream in one session is no joke!

That chunking lesson is so real — token limits hit different when you're working with raw PDFs.

And the Haystack pipeline point about API contracts is...

You can reach the code by clicking the completed work button. Thank you for your kind words!

Here is the YouTube link: https://www.youtube.com/watch?v=lqS51ek4azw&list=PLr1CkC0uCFer9qrZ9BNxdcTjpRxp57e0F

Great work!

Thank you!


