Convert Prompt To Fine-Tuned Model

Farouq A.

GPT-4o and similar models are generalists. Your task is specialized.

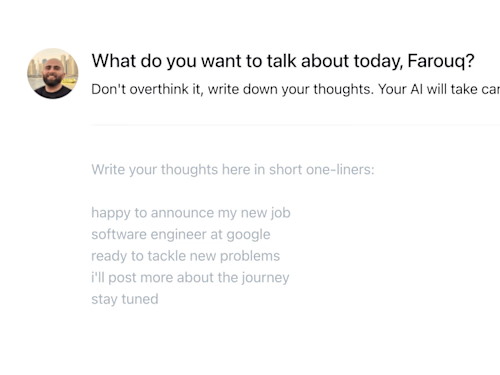

I will fine-tune a model for your specific use case that performs better, costs less, and uses fewer tokens.

What's included

Fine-tuned model for your task

A faster, cheaper, and better-performing fine-tuned model for your task.

Starting at $500

Duration: 1 week

Tags

ChatGPT

AI Chatbot Developer

ML Engineer

Prompt Writer

Service provided by

Farouq A., Stockholm, Sweden