Production RAG System Build — Document Q&A with Hybrid RetrievalCayman Roden

Most RAG implementations fail in production for one of two reasons: retrieval is too shallow to find the right content, or LLM costs spiral once real users start querying at volume. This service addresses both.

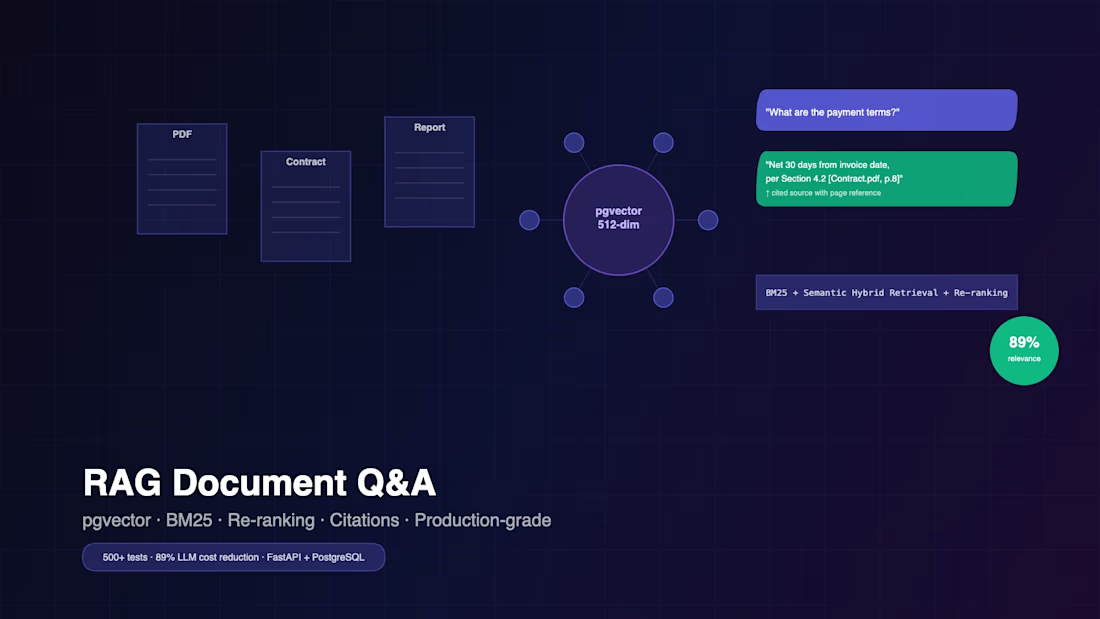

I'll build you a retrieval-augmented generation system grounded in the DocQA Engine architecture — benchmarked to 89% LLM cost reduction and 88% cache hit rate in production. The retrieval layer uses a hybrid approach: BM25 keyword matching, TF-IDF scoring, and dense semantic search combined, then re-ranked with a cross-encoder to maximize answer precision. The caching layer uses a 3-tier Redis strategy (in-memory L1, Redis L2, semantic similarity matching at L3) so repeated and semantically similar queries don't hit the LLM at all.

You get a system that's fast, cost-controlled, and maintainable — not a notebook, not a LangChain boilerplate, not a demo. Every deliverable is production code with tests, documentation, and CI.

What's included: FastAPI backend, hybrid BM25+semantic retrieval (ChromaDB/FAISS), cross-encoder re-ranking, 3-tier Redis cache, REST API with auth + rate limiting, full pytest suite (80%+ coverage), Docker Compose, GitHub Actions CI, Architecture Decision Records, Mermaid diagram, deployment guide, and a 30-minute handoff call.

$1,500 flat. 7-10 business days. Scope locked at kickoff.

Starting at$1,500

Duration2 weeks

Tags

Docker

FastAPI

PostgreSQL

Python

Redis

Machine Learning

Service provided by

Cayman Roden Palm Springs, USA

Production RAG System Build — Document Q&A with Hybrid RetrievalCayman Roden

Starting at$1,500

Duration2 weeks

Tags

Docker

FastAPI

PostgreSQL

Python

Redis

Machine Learning

Most RAG implementations fail in production for one of two reasons: retrieval is too shallow to find the right content, or LLM costs spiral once real users start querying at volume. This service addresses both.

I'll build you a retrieval-augmented generation system grounded in the DocQA Engine architecture — benchmarked to 89% LLM cost reduction and 88% cache hit rate in production. The retrieval layer uses a hybrid approach: BM25 keyword matching, TF-IDF scoring, and dense semantic search combined, then re-ranked with a cross-encoder to maximize answer precision. The caching layer uses a 3-tier Redis strategy (in-memory L1, Redis L2, semantic similarity matching at L3) so repeated and semantically similar queries don't hit the LLM at all.

You get a system that's fast, cost-controlled, and maintainable — not a notebook, not a LangChain boilerplate, not a demo. Every deliverable is production code with tests, documentation, and CI.

What's included: FastAPI backend, hybrid BM25+semantic retrieval (ChromaDB/FAISS), cross-encoder re-ranking, 3-tier Redis cache, REST API with auth + rate limiting, full pytest suite (80%+ coverage), Docker Compose, GitHub Actions CI, Architecture Decision Records, Mermaid diagram, deployment guide, and a 30-minute handoff call.

$1,500 flat. 7-10 business days. Scope locked at kickoff.

$1,500