AI Guardrail & Adversarial Reliability Assessment

Aaron House

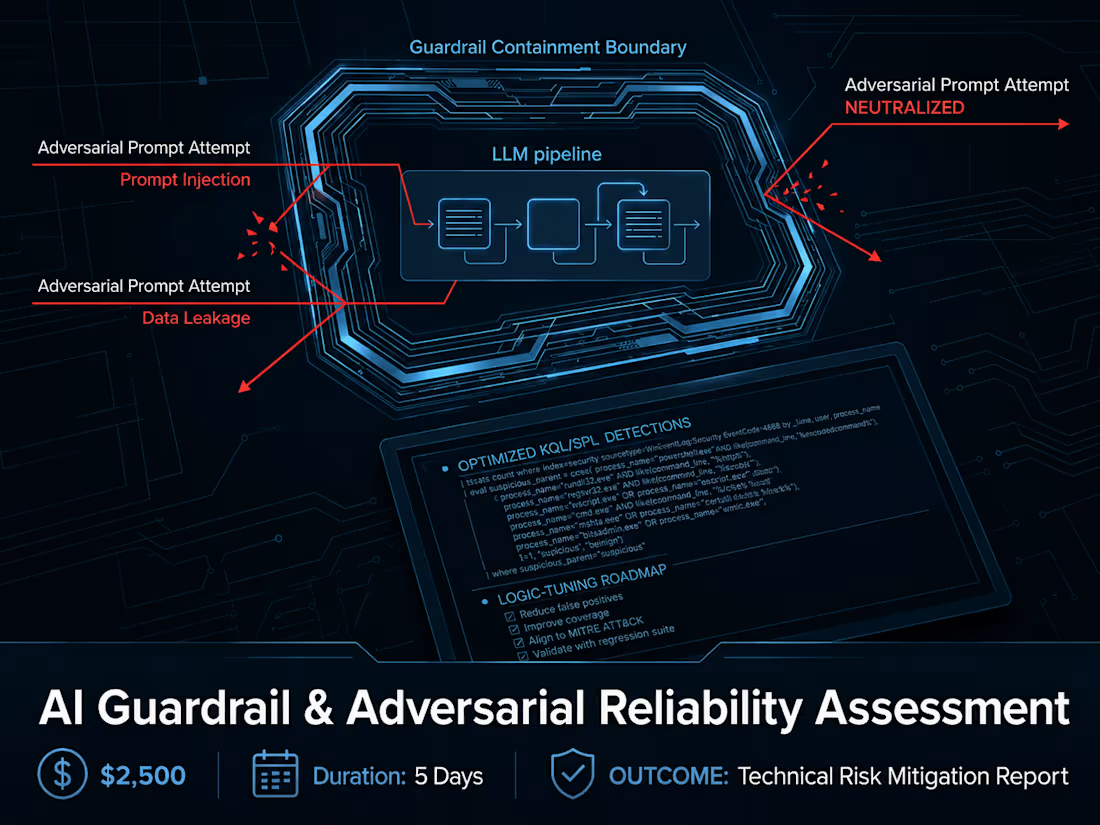

Enterprise LLM and RAG deployments are frequently exposed to prompt injection, data leakage, and hallucination risks that threaten brand integrity. This engagement provides a rigorous, adversarial stress-test of your integrated AI workflows. I execute targeted red-teaming protocols to expose vulnerabilities and validate containment boundaries before deployment.

What You Receive (Deliverable):

A comprehensive technical risk-mitigation report detailing all identified vulnerabilities, validated guardrail configurations, and an actionable roadmap for secure foundation model alignment.

Starting at $2,500

Duration: 4 days

Tags

Cybersecurity Specialist

Artificial Intelligence

Service provided by

Aaron House, Mechanicsville, USA
