Machine Learning Inference Model Development and Deployment

Javier Aquique

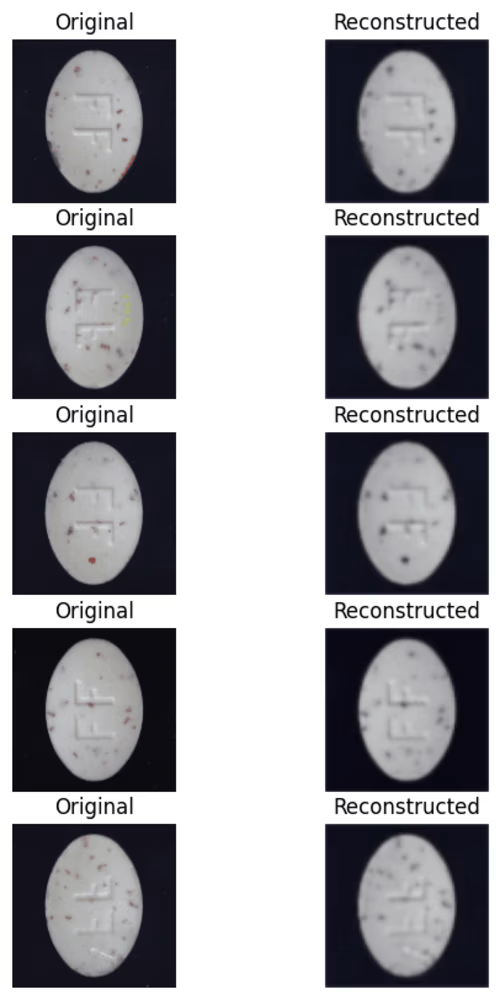

I develop and deploy high-performance machine learning inference models that turn complex data into real-time, actionable predictions. From model optimization to API integration and scalable cloud deployment, I make sure your AI solutions run efficiently and reliably. I focus on both technical excellence and real-world usability, drawing on my engineering expertise to deliver models that are not only accurate but also scalable, secure, and ready for production.

What's included

Trained & Optimized ML Model

A fully trained model tailored to your specific use case, optimized for accuracy and performance.

Model Inference API

A REST or GraphQL API that allows seamless integration of the model into your application or system.

Deployment on Cloud or Edge

Deployment on AWS, GCP, Azure, or on-premises for real-time or batch inference.

Automated Preprocessing Pipeline

A data pipeline that cleans, transforms, and prepares input data before feeding it into the model.

Monitoring & Logging System

A dashboard or logging system to track model performance, latency, and potential drift over time.
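To give a sense of what drift tracking can look like, here is a minimal sketch that computes the Population Stability Index (PSI) between a reference sample and live inputs. It is an illustration only, not the delivered monitoring system: the function name, the bin count, and the smoothing constant are all assumptions, and the right drift metric depends on the project.

```python
import math
from typing import List, Sequence


def population_stability_index(
    reference: Sequence[float], live: Sequence[float], bins: int = 10
) -> float:
    """Hypothetical helper: PSI between a reference sample and live data."""
    lo, hi = min(reference), max(reference)
    # Equal-width bins over the reference range; fall back to 1.0 if the range is zero.
    width = (hi - lo) / bins or 1.0

    def bucket_shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the reference range are clamped into the edge bins.
            counts[max(0, min(bins - 1, idx))] += 1
        # Floor each share at a small constant so the logarithm is always defined.
        return [max(c / len(values), 1e-6) for c in counts]

    ref = bucket_shares(reference)
    cur = bucket_shares(live)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


reference_sample = [i / 100 for i in range(100)]
shifted_sample = [0.5 + i / 200 for i in range(100)]
print(population_stability_index(reference_sample, reference_sample))
print(population_stability_index(reference_sample, shifted_sample))
```

A common rule of thumb treats a PSI above roughly 0.2 as a shift worth investigating, though the threshold should be tuned per feature and use case.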

Scalability & Performance Optimization

Optimized model inference speed using quantization, model pruning, or hardware acceleration (e.g., GPUs/TPUs).
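As a rough illustration of the idea behind quantization, the sketch below maps floating-point weights to 8-bit integers with a single symmetric scale and then restores them. It is a simplified, framework-free example under stated assumptions (per-tensor symmetric scaling, hypothetical function names); real projects would normally rely on the quantization tooling of the chosen framework.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Hypothetical helper: symmetric per-tensor quantization to [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # A scale of 1.0 avoids division by zero when every weight is zero.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Map the integers back to approximate floating-point values."""
    return [q * scale for q in quantized]


weights = [0.5, -1.0, 0.25, 0.0]
q_weights, scale = quantize_int8(weights)
restored = dequantize(q_weights, scale)
print(q_weights, scale)
print(restored)
```

Each restored weight differs from the original by at most half the scale, which is the accuracy given up in exchange for smaller storage and faster integer arithmetic.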

Security & Access Control

Authentication and authorization mechanisms for secure API access and usage tracking.
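One simple form of API authentication is an API key check. The sketch below is a minimal, standard-library example and only an assumption about how such a check might look: the function names and the in-memory key store are hypothetical, and a real deployment could just as well use OAuth, JWTs, or a cloud provider's gateway.

```python
import hashlib
import hmac
from typing import Iterable


def hash_key(api_key: str) -> str:
    """Store only a SHA-256 digest of each key, never the raw key."""
    return hashlib.sha256(api_key.encode("utf-8")).hexdigest()


def is_authorized(presented_key: str, stored_hashes: Iterable[str]) -> bool:
    """Hypothetical helper: check a presented key against stored digests."""
    presented = hash_key(presented_key)
    # compare_digest takes time independent of where the strings differ,
    # which limits timing side channels on the comparison itself.
    matches = [hmac.compare_digest(presented, stored) for stored in stored_hashes]
    return any(matches)


# Illustrative in-memory store; a real service would load digests from a database.
stored = {hash_key("demo-key")}
print(is_authorized("demo-key", stored))
print(is_authorized("wrong-key", stored))
```

Usage tracking can then be layered on top by logging each authorized request against the matching key's digest.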

Comprehensive Documentation & User Guide

A detailed guide covering API usage, model architecture, deployment setup, and maintenance best practices.

Final Review & Support

A project handoff session and optional post-deployment support for debugging, model retraining, and updates.


Contact for pricing

Tags

pandas

Python

PyTorch

scikit-learn

TensorFlow

AI Developer

AI Model Developer

ML Engineer

Service provided by

Javier Aquique, Alcobendas, Spain
