AI/ML Data Preparation & Labeling Pipelines

Abhiram Kannuri

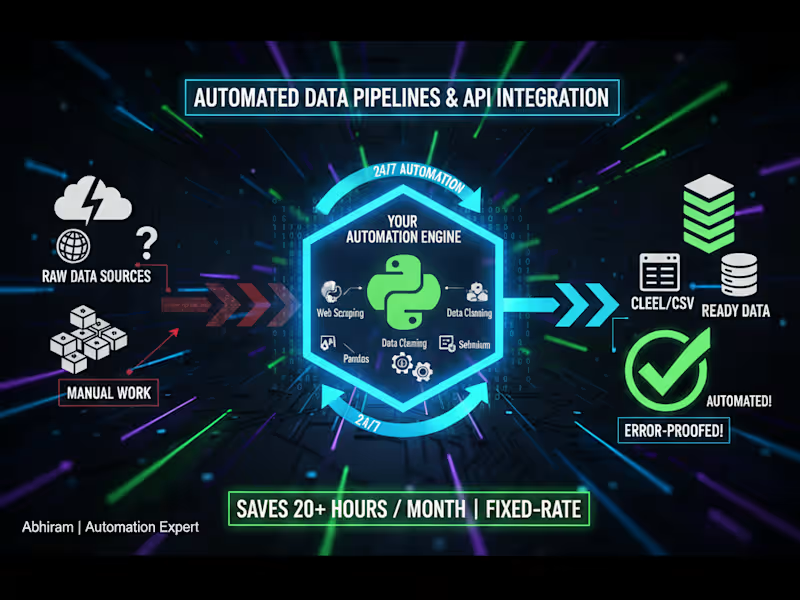

Full pipeline development covering data acquisition (scraping or API), advanced cleaning and feature engineering (using pandas and NumPy), and delivery of a production-ready data set of up to 10,000 records/items. Includes a final data validation report.

What's included

ML-Ready Data Set (JSON/CSV)

The final structured data set, formatted and cleaned according to the specific requirements of the client's ML model (e.g., text pre-processed, images resized/labeled).

Data Scraping/Cleaning Script

The documented, repeatable Python script that executes the full data acquisition and cleaning logic, allowing the client to refresh the data set in the future.
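As a rough illustration of what the cleaning stage of such a script might look like, here is a minimal sketch. It is not the author's actual script: it uses only the Python standard library rather than pandas/NumPy to stay dependency-free, and the names `clean_records`, `to_csv`, and `MAX_RECORDS`, as well as the sample fields `id` and `text`, are hypothetical.

```python
import csv
import io
import json

# Cap taken from the listing's "up to 10,000 records/items".
MAX_RECORDS = 10_000


def clean_records(raw_records, required_fields):
    """Trim whitespace, drop incomplete rows, and remove exact duplicates."""
    cleaned = []
    seen = set()
    for record in raw_records:
        # Trim string values; leave other types untouched.
        row = {k: v.strip() if isinstance(v, str) else v for k, v in record.items()}
        # Skip rows where any required field is missing or empty.
        if any(row.get(field) in (None, "") for field in required_fields):
            continue
        # Deduplicate on the full row content (assumes JSON-serializable values).
        key = json.dumps(row, sort_keys=True)
        if key in seen:
            continue
        seen.add(key)
        cleaned.append(row)
        if len(cleaned) >= MAX_RECORDS:
            break
    return cleaned


def to_csv(records, fieldnames):
    """Serialize cleaned records to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, extrasaction="ignore")
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


# Hypothetical raw input, standing in for scraped or API-fetched data.
raw = [
    {"id": 1, "text": "  hello world "},
    {"id": 1, "text": "hello world"},   # duplicate once whitespace is trimmed
    {"id": 2, "text": ""},              # dropped: required field is empty
    {"id": 3, "text": "second item"},
]
dataset = clean_records(raw, required_fields=["id", "text"])
csv_text = to_csv(dataset, fieldnames=["id", "text"])
json_text = json.dumps(dataset, indent=2)
```

Keeping the cleaning logic in a pure function like this is one way to make the script repeatable: the same function can be rerun on freshly acquired data whenever the data set needs a refresh.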

Validation Report

A PDF or Jupyter Notebook file detailing the data's quality, completeness, and any labeling methodologies used, ensuring transparency and model reliability.
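The kind of figures such a report might contain can be sketched as follows. This is an assumption about what "quality" and "completeness" could mean in practice, not the author's actual report format; the function name `validation_summary` and the sample fields `text` and `label` are hypothetical.

```python
from collections import Counter


def validation_summary(records, fields, label_field=None):
    """Compute per-field completeness and, optionally, label counts."""
    total = len(records)
    completeness = {}
    for field in fields:
        # A value counts as filled if it is neither None nor an empty string.
        filled = sum(1 for r in records if r.get(field) not in (None, ""))
        completeness[field] = filled / total if total else 0.0
    summary = {"total_records": total, "completeness": completeness}
    if label_field is not None:
        # Label distribution, including None for unlabeled records.
        summary["label_counts"] = dict(Counter(r.get(label_field) for r in records))
    return summary


# Hypothetical labeled sample.
sample = [
    {"text": "good product", "label": "positive"},
    {"text": "bad service", "label": "negative"},
    {"text": "", "label": "positive"},
    {"text": "fine", "label": None},
]
report = validation_summary(sample, fields=["text", "label"], label_field="label")
```

A summary dictionary like this could be rendered in a Jupyter Notebook cell or exported to PDF alongside a written description of the labeling methodology.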

Starting at $30/hr

Tags

Python

PyTorch

scikit-learn

TensorFlow

Variational Autoencoders (VAEs)

AI Developer

AI Model Developer

ML Engineer

Service provided by

Abhiram Kannuri (pro)

Normanton, UK

- Paid projects: 1

- Rating: 5.00

- Followers: 12
