Data Science

Akshay Agrawal

🤖 Generative AI & Agentic Document Intelligence

Architected an end-to-end Generative AI pipeline using transformer-based LLMs, enabling intelligent document categorization and context-aware semantic search across enterprise repositories

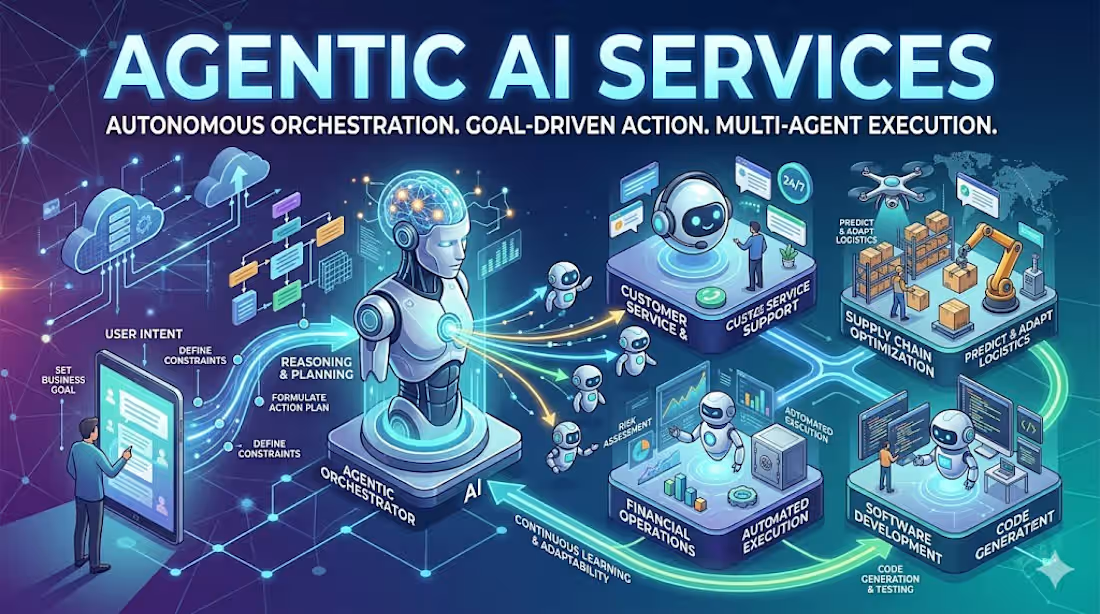

Engineered agentic AI workflows with multi-step reasoning chains, implementing MCP (Model Context Protocol) and A2A (Agent-to-Agent) interaction patterns for autonomous document processing

Deployed a hybrid RAG architecture combining FAISS vector similarity search with structured queries, reducing irrelevant results and improving LLM grounding and retrieval accuracy

Containerized and deployed scalable model inference pipelines using Docker on Azure Kubernetes Service (AKS), ensuring production-grade availability and horizontal scalability

🧠 LLM Fine-tuning & NLP Classification

Fine-tuned BERT and GPT-family models for multi-class ticket classification, automating customer support routing across thousands of daily conversations

Built automated CI/CD workflows for model training and deployment using Kubeflow Pipelines, cutting manual deployment effort and enabling continuous model improvement

Optimized model inference to meet production SLAs and integrated the models via REST APIs into live customer-facing microservices, reducing response latency

📊 Predictive AI & Cloud Deployment (GCP)

Designed and deployed customer purchase prediction models on Google Cloud AI Platform, enabling real-time inference for high-throughput transactional workloads

Engineered features from large-scale MySQL datasets using Python (Pandas, NumPy), applying statistical modeling and EDA to surface key behavioral signals driving purchase decisions

Starting at $10/hr

Tags

AI Engineer

Data Analyst

Data Scientist

Generative AI

Service provided by

Akshay Agrawal, Delhi, India
