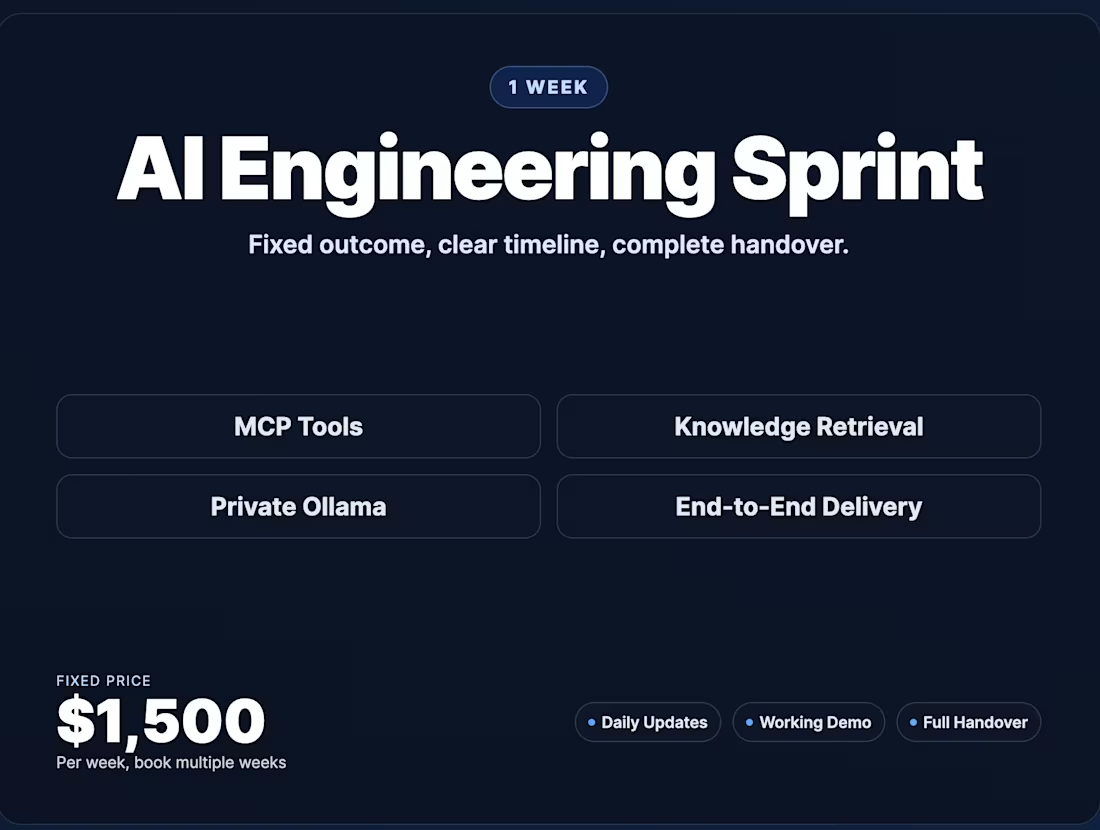

AI Engineering Sprint: 1-Week Delivery Sprint

Abdul Ghafoor

I deliver one working AI engineering milestone per week—end-to-end from design through handover.

We scope the focus area on Day 1 (MCP integrations, RAG pipelines, local AI setup, or full-stack), execute on Days 2-4, and finish with validation and handover on Day 5.

You get working code, documentation, and a clear acceptance checklist.

What You'll Get (Every Sprint)

By Friday, you receive:

Working implementation for your chosen focus area

Runnable code with setup/deployment steps

Test results and performance benchmarks

Demo walkthrough

Roadmap for next sprint (if needed)

Definition of done: The code runs reliably in your environment and meets the acceptance checklist you approve on Day 1.

Typical Focus Areas (Choose 1 Per Sprint)

Focus: AI Tool Access (MCP Server)

For teams needing: AI assistants to safely call internal workflows

Example: Your AI assistant can look up customer records, check order status, and restart jobs—all via safe MCP tools.

You get: 3 production MCP tools, auth pattern, working integration.
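As a rough illustration of what a "safe tool" pattern can look like, here is a minimal standard-library sketch of allow-listed tool dispatch behind a token check. It is not the MCP protocol or any MCP SDK; the registry, the `check_order_status` tool, the stubbed order data, and the shared-token scheme are all hypothetical names chosen for the example.

```python
import hmac
from typing import Callable, Dict

# Hypothetical registry: tool name -> handler. Only registered tools can be called.
TOOLS: Dict[str, Callable[..., dict]] = {}


def tool(name: str):
    """Register a function as a callable tool under an explicit name."""
    def decorator(fn):
        TOOLS[name] = fn
        return fn
    return decorator


@tool("check_order_status")
def check_order_status(order_id: str) -> dict:
    # Stubbed data for illustration; a real handler would query the order system.
    orders = {"A100": "shipped", "A101": "processing"}
    return {"order_id": order_id, "status": orders.get(order_id, "unknown")}


def call_tool(name: str, token: str, expected_token: str, **kwargs) -> dict:
    """Run a tool only if the caller's token matches and the tool is allow-listed."""
    # Constant-time comparison avoids leaking token contents through timing.
    if not hmac.compare_digest(token, expected_token):
        raise PermissionError("invalid token")
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**kwargs)
```

The point of the sketch is the shape: the assistant can only reach functions that were explicitly registered, and every call passes through one auth check before any handler runs.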

Focus: Knowledge Retrieval (Qdrant + Ingestion)

For teams that have: Scattered docs, wikis, and tickets that need one searchable layer

Example: Support AI finds the exact help article instead of guessing. Sales AI retrieves relevant customer history.

You get: Indexed Qdrant collection, ingestion pipeline, retrieval tuning, test queries.
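To make "test queries" concrete, the sketch below scores a small set of query/expected-document pairs against a toy in-memory index and reports the hit rate. It is a stand-in only: the bag-of-words `embed` function replaces a real embedding model, the in-memory dictionary replaces a Qdrant collection, and the documents and queries are invented for the example.

```python
import math
import re
from collections import Counter
from typing import Dict, List, Tuple


def embed(text: str) -> Counter:
    """Toy bag-of-words vector; a real pipeline would use an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def search(query: str, docs: Dict[str, str], k: int = 1) -> List[str]:
    """Return the ids of the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(docs, key=lambda doc_id: cosine(q, embed(docs[doc_id])), reverse=True)
    return ranked[:k]


def hit_rate(test_queries: List[Tuple[str, str]], docs: Dict[str, str], k: int = 1) -> float:
    """Fraction of test queries whose expected document appears in the top k."""
    hits = sum(1 for query, expected in test_queries if expected in search(query, docs, k))
    return hits / len(test_queries)


# Hypothetical help-center documents and acceptance queries.
DOCS = {
    "reset-password": "How to reset your account password",
    "refund-policy": "Refund policy for cancelled orders",
    "export-data": "Export your data as CSV",
}
TEST_QUERIES = [
    ("reset password", "reset-password"),
    ("refund for cancelled order", "refund-policy"),
    ("export csv", "export-data"),
]

if __name__ == "__main__":
    print(f"hit rate @1: {hit_rate(TEST_QUERIES, DOCS, k=1):.2f}")
```

A fixed list of query/expected pairs like this can double as part of the acceptance checklist: retrieval tuning is done when the agreed hit rate is reached on the agreed queries.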

Focus: Private AI Model (Ollama Setup)

For teams needing: Privacy, cost control, or low latency for AI inference

Example: Your team's AI assistant runs locally on your server—no reliance on OpenAI, no per-token costs.

You get: Ollama runtime, model tuning, API integration, performance benchmarks.
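For a sense of what the API integration and benchmarks can involve, here is a minimal standard-library sketch that calls a local Ollama server's `/api/generate` endpoint and derives tokens per second from the `eval_count` and `eval_duration` (nanoseconds) fields of the response. It assumes Ollama is running on its default port 11434; the model name in the usage lines is a placeholder for whatever model you have pulled.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # default local address (assumed)


def build_request(model: str, prompt: str, url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout: float = 120.0) -> dict:
    """Send the request and return the parsed JSON response."""
    with urllib.request.urlopen(build_request(model, prompt), timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))


def tokens_per_second(result: dict) -> float:
    """Generated tokens divided by generation time (eval_duration is in nanoseconds)."""
    duration_ns = result.get("eval_duration", 0)
    if not duration_ns:
        return 0.0
    return result.get("eval_count", 0) / (duration_ns / 1e9)


if __name__ == "__main__":
    # "llama3" is a placeholder; substitute a model available on your server.
    result = generate("llama3", "Summarize why local inference can reduce cost.")
    print(result["response"])
    print(f"{tokens_per_second(result):.1f} tokens/s")
```

Running the same prompt set against each candidate model and recording tokens per second gives a simple, repeatable baseline for the benchmark part of the handover.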

Focus: Full AI Stack (End-to-End)

For teams ready to build: One complete workflow from data → retrieval → AI decision → action

Example: The ops team gets an AI assistant that reads alerts → searches incident history → suggests a fix → posts to Slack.

You get: Minimal vertical slice connecting MCP, retrieval, and LLM for one business workflow.
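The vertical slice in the example can be sketched as one small function that wires the three stages together. This is an illustrative outline only: `search_incidents`, `suggest_fix`, and `post_message` are hypothetical callables standing in for the retrieval layer, the LLM call, and the Slack integration, and they are passed in so each stage can be swapped or stubbed independently.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Suggestion:
    alert: str
    related_incidents: List[str]
    suggested_fix: str


def handle_alert(
    alert: str,
    search_incidents: Callable[[str], List[str]],
    suggest_fix: Callable[[str, List[str]], str],
    post_message: Callable[[str], None],
) -> Suggestion:
    """Run one alert through retrieval, suggestion, and notification."""
    incidents = search_incidents(alert)      # retrieval step
    fix = suggest_fix(alert, incidents)      # LLM decision step
    post_message(f"Alert: {alert}\nSuggested fix: {fix}")  # action step
    return Suggestion(alert, incidents, fix)


if __name__ == "__main__":
    # Stubbed stages for a dry run; real ones would call the actual services.
    result = handle_alert(
        "disk usage above 90% on db-1",
        search_incidents=lambda a: ["Past incident: disk full on db-1, old logs removed"],
        suggest_fix=lambda a, inc: "Rotate and remove old logs, then recheck usage",
        post_message=print,
    )
    print(result)
```

Keeping the stages as injected functions makes the slice testable without any live services, which helps when checking it against the acceptance checklist.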

What Happens Each Sprint

Day 1: Scope definition + acceptance criteria locked

Days 2-4: Daily progress updates (Slack/email)

Day 5: Demo + handover package (code, docs, next steps)

Out of Scope

Full enterprise rollout in one sprint

Unlimited scope additions mid-sprint

24/7 on-call support (post-handover)

Starting at: $1,500

Duration: 1 week

Tags: MCP, Ollama, Python, RAG, DevOps Engineer, Artificial Intelligence, Qdrant

Service provided by: Abdul Ghafoor (Pro), Dublin, Ireland

30 followers
