Custom Edge-AI & Local Visual Intelligence Solutions

Medhansh Singh

I bridge the gap between AI research and functional business tools. Using local vision-language models such as Moondream, served through Ollama, I build private visual auditing systems that run entirely on your own hardware. That removes recurring cloud API costs and keeps your images on your own machines.

From automated quality control to real-time inventory tracking, I deliver end-to-end AI applications optimized for restricted local hardware.

What's included

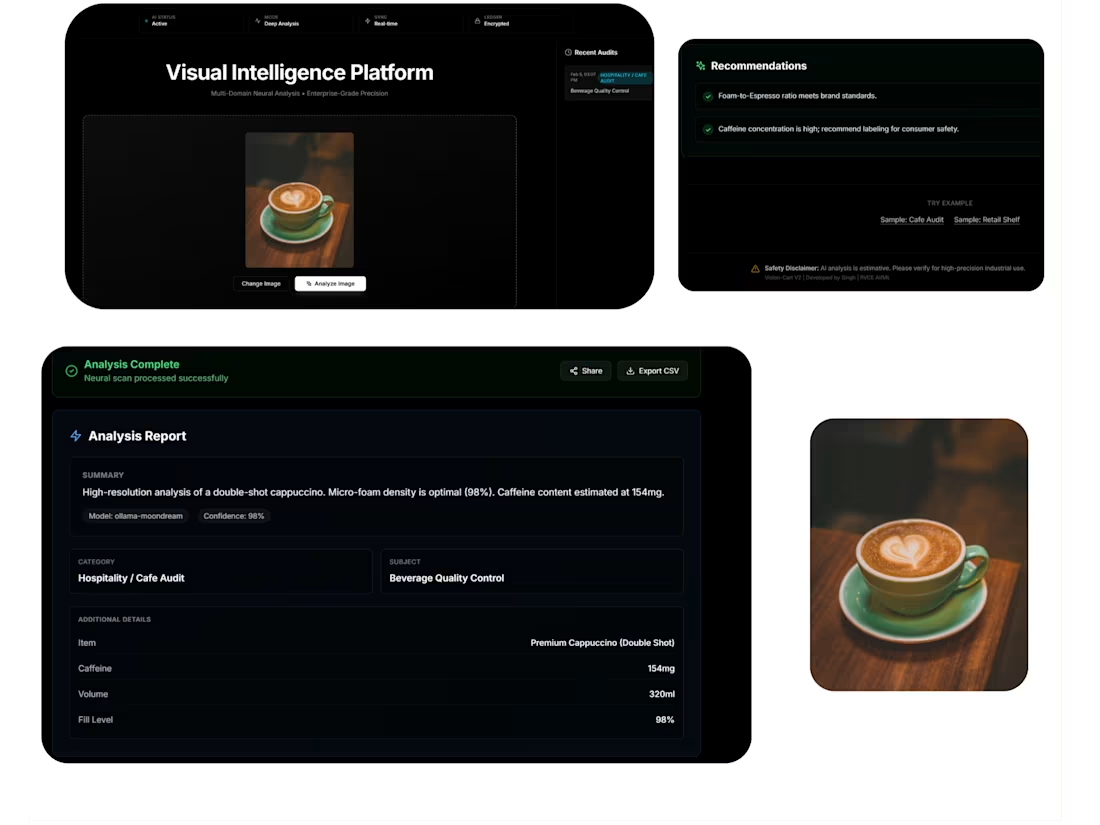

Private Vision AI Dashboard

A custom-built web dashboard (React front end, FastAPI back end) integrated with a local vision model (Moondream) for private, real-time image auditing.

Optimized Local Inference Pipeline

A fully configured local environment using Ollama, tuned to run visual feature extraction on constrained hardware (2 GB of VRAM).
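
As a rough illustration of what a single step in such a pipeline can look like, the sketch below sends one image to a locally running Ollama server and reads back the model's answer. It assumes Ollama is listening on its default address (`http://localhost:11434`) and that the `moondream` model has already been pulled. The helper names `build_audit_request` and `audit_image`, the prompt, and the reduced `num_ctx` value are illustrative assumptions, not the delivered configuration.

```python
import base64
import json
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_audit_request(image_bytes: bytes, prompt: str, model: str = "moondream") -> dict:
    """Build the JSON payload for one non-streaming image query (hypothetical helper)."""
    return {
        "model": model,
        "prompt": prompt,
        # Ollama expects images as base64-encoded strings.
        "images": [base64.b64encode(image_bytes).decode("ascii")],
        "stream": False,
        # Assumption: a small context window to reduce memory use on a small GPU.
        "options": {"num_ctx": 1024},
    }


def audit_image(image_bytes: bytes, prompt: str, url: str = OLLAMA_URL) -> str:
    """Send the image to the local server and return the model's text answer."""
    payload = json.dumps(build_audit_request(image_bytes, prompt)).encode("utf-8")
    request = urllib.request.Request(
        url, data=payload, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=120) as response:
        return json.loads(response.read())["response"]
```

With the server running, a call such as `audit_image(open("part.jpg", "rb").read(), "Is there visible damage on this part?")` would return the model's description as plain text; the file name and prompt here are placeholders.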

FAQs

$500