Annotation, Evaluation, and QA

USMAN AKINTOBI

AI Data Labeling & Annotation Services

I provide high-quality data labeling and annotation services for AI and machine learning teams that need accurate, consistent, and well-documented training data. With experience across multiple annotation platforms including Label Studio, and a background working with companies like Turing, Micro1, and TrainAI/RWS, I bring both technical understanding and labeling rigor to every project.

What I can help with:

Computer Vision Annotation

Bounding box annotation, object detection labeling, image classification, and segmentation tasks for CV pipelines. I'm comfortable working with complex and edge-case scenarios that require careful judgment rather than just pattern-following.

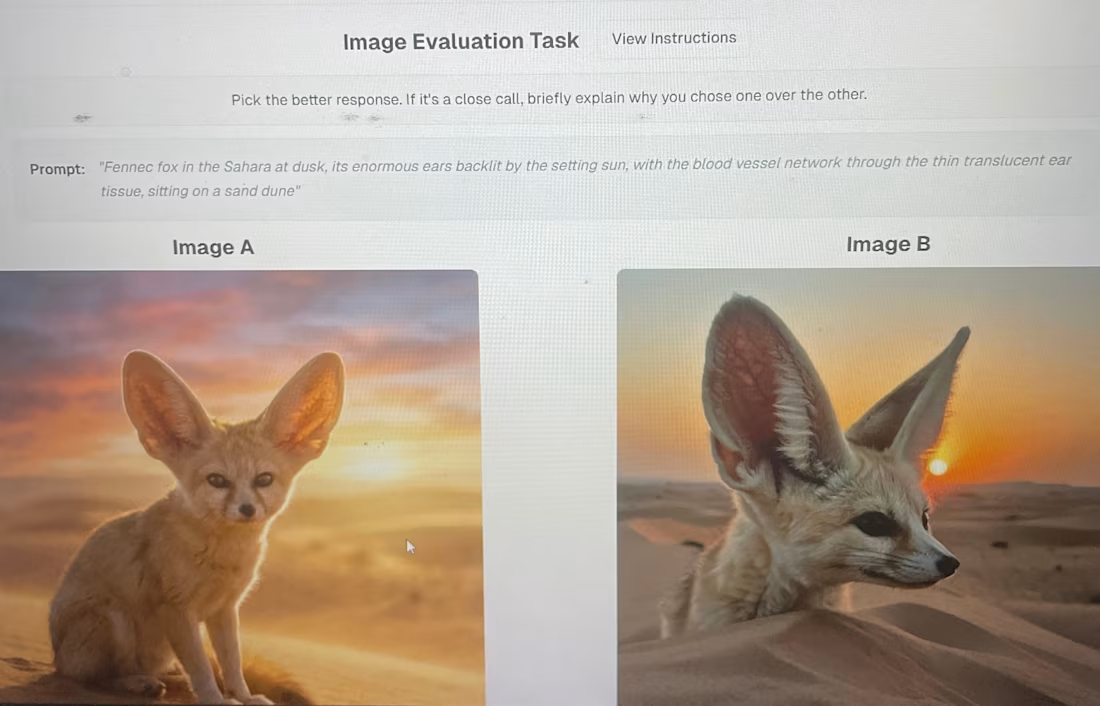

Multimodal & VLM Evaluation

Rating and evaluating model outputs derived from combined image and text inputs across criteria such as instruction following, content retention, visual quality, AI-generated content detection, and response coherence. Useful for teams fine-tuning or evaluating Vision Language Models and Multimodal LLMs.

RLHF & Model Feedback

Human feedback collection and response ranking for reinforcement learning pipelines. I've worked on RLHF tasks that require nuanced preference judgments, making me well-suited for alignment and fine-tuning projects.

RAG Pipeline Annotation

Labeling and evaluating retrieval-augmented generation outputs, including relevance scoring, context grounding, and answer quality assessment, to help teams ensure their RAG systems return accurate and useful responses.

NLP & Text Annotation

Named entity recognition, intent classification, sentiment labeling, and text categorization for NLP models. I've also built independent NLP projects including a context-aware feedback classification system, giving me a developer-level understanding of how annotations translate into model behavior.

Quality Assurance & Process Improvement

Beyond labeling, I've reviewed and QA'd other annotators' work, flagged inconsistencies, and contributed to refining labeling guidelines. I can serve as a senior annotator or QA reviewer on larger teams.

Why work with me:

I understand what the data is for. Whether you're training a detection model, fine-tuning a multimodal LLM, or evaluating a RAG pipeline, I bring context to the work that improves output quality and reduces rework. I'm detail-oriented, follow complex rubrics accurately, and communicate clearly when guidelines need clarification.

Ideal for startups, AI labs, and research teams that need a reliable, experienced annotator who can hit the ground running.

Starting at $15/hr

Tags

Labelbox

Python

TensorFlow

TypeScript

Service provided by

USMAN AKINTOBI, Nigeria

- Rating: 5.00
- Followers: 6
