RAG System & Vector Search SetupAbrar Mohtasim

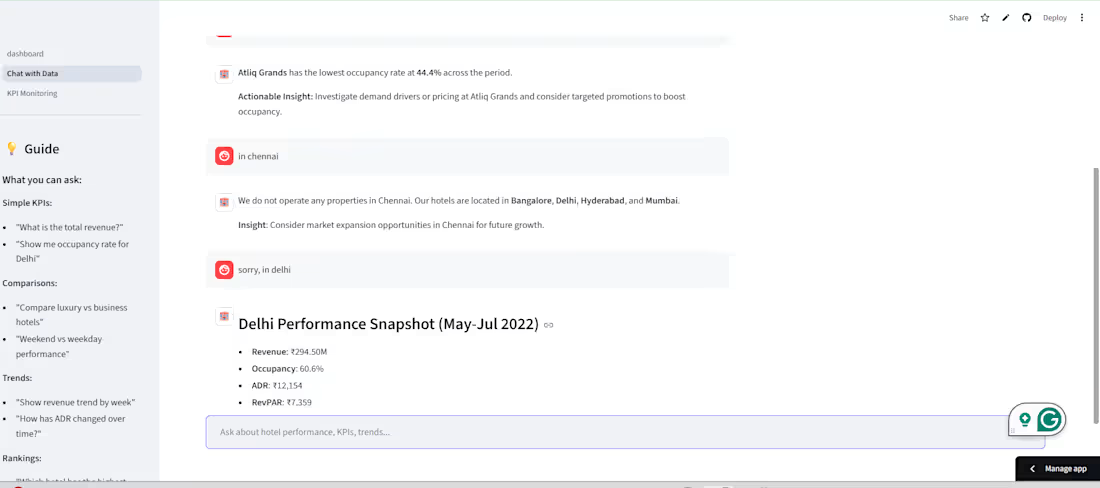

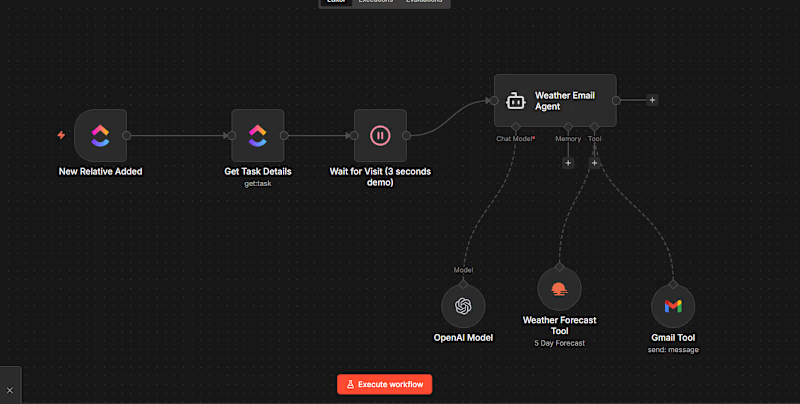

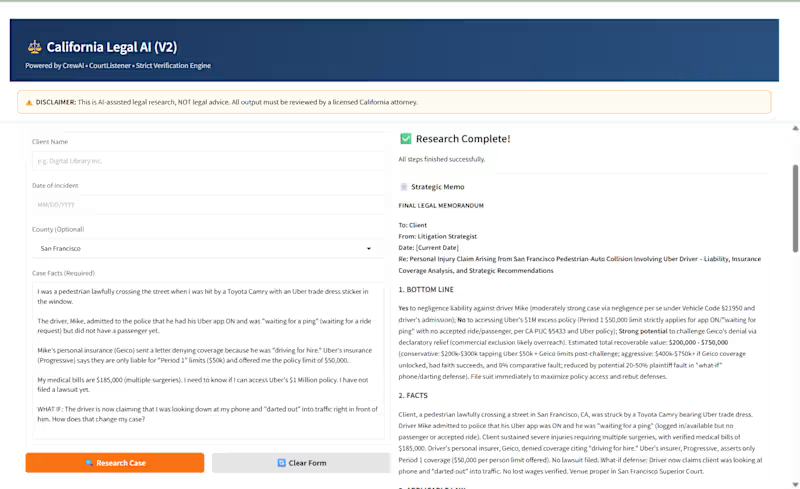

I design and deploy Retrieval-Augmented Generation pipelines that let your LLM answer questions from your own documents, databases, or knowledge bases — accurately, with citations, and without hallucinating. From embedding strategy to query routing, I handle the full pipeline.

What's included:

Document Ingestion & Chunking Strategy

Process your PDFs, Word docs, CSVs, or web content into optimized chunks for retrieval. Includes cleaning, metadata tagging, and chunking strategies tuned to your document type and query patterns.

Embedding & Vector Database Setup

Generate embeddings using top models (OpenAI, Sentence-Transformers) and store them in a vector database of your choice — FAISS for local, Pinecone or Weaviate for cloud-scale. Includes index configuration and similarity tuning.

Query Routing & Context Retrieval

Build smart retrieval logic that selects the right documents for each query. Includes hybrid search (vector + keyword), re-ranking, and context window management for accurate LLM grounding.

RAG Pipeline Integration with LLM

Wire the retrieval layer to your chosen LLM with a grounding prompt that keeps answers anchored to retrieved context. Outputs include source citations so users can verify every answer.

Evaluation & Performance Testing

Test retrieval accuracy, answer relevance, and hallucination rate on real queries from your dataset. Iterate until the system meets your quality threshold with documented results.

Tags:

RAG LangChain LlamaIndex Pinecone FAISS Weaviate Embeddings Vector Database AI DeveloperStarting at$600

Duration1 week

Tags

LangChain

AI Agent Developer

AI Agent Engineer

AI Developer

AI Engineer

llm fine tuning

pinecone

Service provided by

Abrar Mohtasim Chattogram, Bangladesh

RAG System & Vector Search SetupAbrar Mohtasim

Starting at$600

Duration1 week

Tags

LangChain

AI Agent Developer

AI Agent Engineer

AI Developer

AI Engineer

llm fine tuning

pinecone

I design and deploy Retrieval-Augmented Generation pipelines that let your LLM answer questions from your own documents, databases, or knowledge bases — accurately, with citations, and without hallucinating. From embedding strategy to query routing, I handle the full pipeline.

What's included:

Document Ingestion & Chunking Strategy

Process your PDFs, Word docs, CSVs, or web content into optimized chunks for retrieval. Includes cleaning, metadata tagging, and chunking strategies tuned to your document type and query patterns.

Embedding & Vector Database Setup

Generate embeddings using top models (OpenAI, Sentence-Transformers) and store them in a vector database of your choice — FAISS for local, Pinecone or Weaviate for cloud-scale. Includes index configuration and similarity tuning.

Query Routing & Context Retrieval

Build smart retrieval logic that selects the right documents for each query. Includes hybrid search (vector + keyword), re-ranking, and context window management for accurate LLM grounding.

RAG Pipeline Integration with LLM

Wire the retrieval layer to your chosen LLM with a grounding prompt that keeps answers anchored to retrieved context. Outputs include source citations so users can verify every answer.

Evaluation & Performance Testing

Test retrieval accuracy, answer relevance, and hallucination rate on real queries from your dataset. Iterate until the system meets your quality threshold with documented results.

Tags:

RAG LangChain LlamaIndex Pinecone FAISS Weaviate Embeddings Vector Database AI Developer$600