I will make your end-to-end machine learning project

Badar Masood

Searching for a Machine Learning Developer?

Congratulations! You have reached the right place.

Machine Learning Services Offered:

1. Predictive Modeling: Constructing models to forecast future events or trends based on historical data.

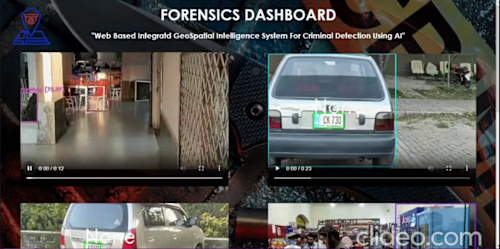

2. Image and Video Analysis: Developing models for object recognition, pattern detection, and classification in images and videos.

3. Natural Language Processing: Building models to comprehend and process human language, including sentiment analysis, text classification, and named entity recognition.

4. Anomaly Detection: Developing models to identify irregular or unexpected data patterns.

5. Recommender Systems: Constructing models to provide personalized recommendations to users based on their preferences and behavior.

6. Fraud Detection: Developing models to detect and prevent fraudulent activities like credit card fraud, insurance fraud, and money laundering.

7. Time Series Forecasting: Building models to predict future values in a time series using historical data.

8. Optimization: Developing models to enhance processes and systems, such as scheduling, resource allocation, and logistics.

9. Risk Assessment: Constructing models to evaluate and quantify risks across various domains like finance, insurance, and healthcare.

10. Decision-Making Support: Developing models to aid decision-making processes in domains like marketing, sales, and operations.

Data Engineering Services Offered:

1. Data Ingestion and Extraction: Extracting and ingesting data from diverse sources.

2. Data Storage Solutions: Implementing databases and data warehouses for efficient data storage.

3. Data Processing and Transformation: Utilizing tools like Apache Spark and Apache Flink for data processing and transformation.

4. Data Security and Privacy: Implementing measures like encryption and access control to ensure data security and privacy.

5. Data Visualization and Reporting: Creating visualizations and reports to facilitate data interpretation.

6. Performance Tuning and Optimization: Optimizing data systems for improved performance.

Experience Highlights as an ML Engineer:

- Proficiency in various machine learning techniques including supervised, unsupervised, and reinforcement learning.

- Expertise in time series forecasting techniques such as RNNs and LSTMs.

- Competence in deep learning architectures such as ANNs, CNNs, RNNs, and GANs.

- Experience in computer vision tasks including object detection.

- Proficient in solving classification, regression, and clustering problems.

- Skilled in data analysis using tools like Pandas and NumPy.

- Proficient in data visualization using Matplotlib and Seaborn.

- Experience with dimensionality reduction techniques like PCA and LDA.

- Knowledgeable in reinforcement learning concepts including policy and reward.

- Capable of addressing prediction and clustering problems using a variety of models, including linear regression, logistic regression, decision trees, SVM, Naive Bayes, KNN, k-means, random forest, and gradient boosting.

What's included

Machine Learning Deliverables

Deliverables for a machine learning project vary with its scale and requirements. Typical deliverables I can provide include:

- A meticulously crafted and trained machine learning model

- Metrics to assess the model's accuracy and efficacy

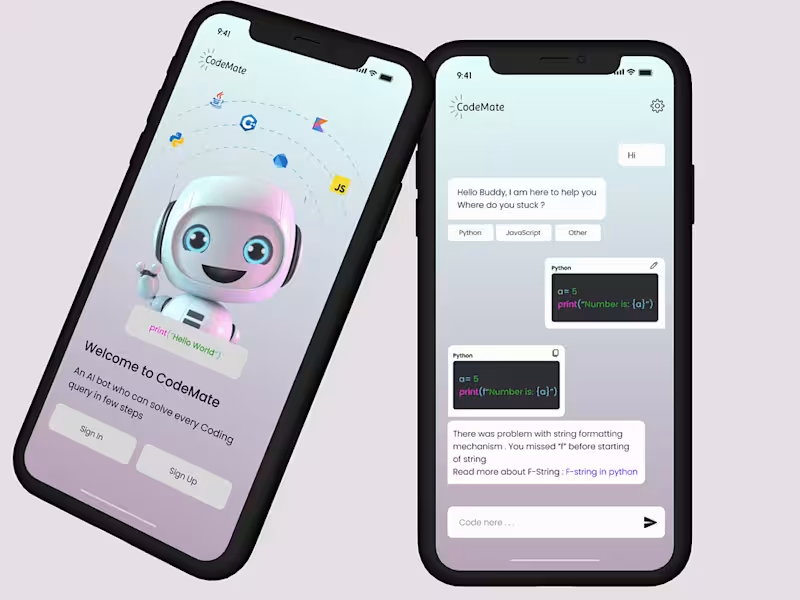

- An intuitive interface for user interaction with the model

- Comprehensive documentation of the machine learning process, encompassing data preprocessing and feature selection

- Presentation of model outcomes and insights, featuring visualizations and reports

- Provision of code and scripts to seamlessly integrate the model into new or existing systems

- Ongoing maintenance and technical assistance for the model

- Suggestions for enhancing and optimizing the model further

- Educational materials and training resources tailored for users of the model
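As an illustration of the evaluation metrics mentioned above, here is a minimal sketch of how accuracy, precision, recall, and F1 might be computed for a binary classifier. The function name `classification_metrics` and the sample labels are hypothetical, chosen only for this example; the metrics used on a real project depend on the problem.

```python
from collections import Counter


def classification_metrics(y_true, y_pred, positive=1):
    """Return accuracy, precision, recall, and F1 for binary labels."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    if not y_true:
        raise ValueError("at least one label is required")

    # Count the four confusion-matrix cells.
    counts = Counter()
    for truth, pred in zip(y_true, y_pred):
        if pred == positive:
            counts["tp" if truth == positive else "fp"] += 1
        else:
            counts["fn" if truth == positive else "tn"] += 1
    tp, fp, fn, tn = (counts[k] for k in ("tp", "fp", "fn", "tn"))

    accuracy = (tp + tn) / len(y_true)
    # Guard against division by zero when a class is never predicted or present.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Hypothetical labels, for illustration only.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]
report = classification_metrics(y_true, y_pred)
print(report)
```

Reporting precision and recall alongside accuracy matters most on imbalanced data such as fraud detection, where accuracy alone can look high while most positive cases are missed.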

Natural Language Processing Deliverables

Deliverables for a natural language processing (NLP) project vary with its scope and requirements. Standard deliverables I can supply include:

- A meticulously crafted and trained NLP model, such as a text classifier, named entity recognizer, or sentiment analysis model.

- Metrics to gauge the precision and effectiveness of the model.

- An intuitive interface for user interaction with the NLP model.

- Thorough documentation of the NLP process, covering data preprocessing, feature selection, and model training.

- Presentation of the model's results and insights, featuring visualizations and reports.

- Provision of code and scripts to seamlessly integrate the NLP model into new or existing systems.

- Continuous maintenance and technical assistance for the NLP model.

- Suggestions for further enhancing and optimizing the NLP model.

- Educational resources and training materials tailored for users of the NLP model.

- Illustrative examples and demonstration datasets to showcase the capabilities of the NLP model.

Data Engineering Deliverables

Deliverables vary with the specific needs of the project. Typical deliverables I can provide include:

- Creation of data pipelines for efficiently ingesting, processing, and storing data from diverse sources.

- Implementation of data warehousing solutions tailored for large-scale data storage and retrieval.

- Generation of data quality reports to validate the accuracy and completeness of the data.

- Establishment of robust data security and privacy policies to safeguard sensitive information.

- Development of performance metrics and monitoring systems to track the efficiency of data systems.

- Preparation of comprehensive documentation detailing the data architecture, processes, and systems.

- Provision of technical support and ongoing maintenance services for data systems.

Data Integration and ETL Pipelines

Deliverables for designing and implementing ETL (extract, transform, load) pipelines that move data from various sources into a centralized data warehouse or data lake vary with the project's scope and requirements. Common deliverables I can offer include:

1. **Data Architecture Design**:

- Designing scalable, reliable, and secure data architectures.

- Selecting appropriate database systems (relational, NoSQL, time-series, etc.) and storage solutions (data lakes, data warehouses).

- Architecting data pipelines for both batch and real-time processing.

2. **Data Integration**:

- Developing ETL (Extract, Transform, Load) pipelines to consolidate data from multiple sources into a centralized repository.

- Implementing data ingestion frameworks for streaming data and batch data processing.

- Creating data APIs for seamless integration of data across systems.

3. **Data Quality Management**:

- Establishing data quality frameworks to ensure accuracy, completeness, and consistency of data.

- Implementing data validation, cleansing, and deduplication processes.

- Monitoring data quality and generating quality reports.

4. **Data Governance and Compliance**:

- Developing data governance policies and procedures.

- Ensuring data compliance with regulatory requirements (e.g., GDPR, HIPAA).

- Implementing data security measures, including encryption, masking, and access controls.

5. **Data Warehouse and Data Lake Development**:

- Designing and implementing data warehousing solutions.

- Building and managing data lakes for storing structured and unstructured data.

- Optimizing data storage for performance and cost efficiency.

6. **Data Analytics and Reporting Infrastructure**:

- Setting up analytics platforms and tools.

- Developing reporting databases, OLAP cubes, and data marts.

- Creating dashboards and reports for business intelligence (BI) purposes.

7. **Cloud Data Engineering**:

- Migrating data infrastructure to the cloud.

- Leveraging cloud-native services for data processing, storage, and analytics (AWS, Google Cloud, Azure).

- Implementing serverless data processing architectures.

8. **Performance Tuning and Optimization**:

- Analyzing and optimizing data storage and retrieval processes.

- Tuning ETL processes and database queries for performance.

- Implementing caching and indexing strategies to improve system performance.

9. **Data Disaster Recovery and Backup**:

- Designing and implementing data backup and recovery strategies.

- Ensuring high availability and fault tolerance of data systems.

- Conducting disaster recovery drills and maintaining recovery documentation.
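To make the data quality items in the list above more concrete (validation, cleansing, deduplication, and a quality report), here is a minimal sketch of a transform step in an ETL pipeline. The function name `clean_records`, the `id` and `email` fields, and the sample rows are assumptions made for this example, not part of any specific project.

```python
def clean_records(records, required=("id", "email")):
    """Cleanse, validate, and deduplicate a batch of dict records.

    Returns (clean, rejected), where rejected holds (record, reason) pairs.
    """
    seen_ids = set()
    clean, rejected = [], []
    for record in records:
        # Cleansing: trim whitespace on string values and lowercase the email.
        row = {k: v.strip() if isinstance(v, str) else v
               for k, v in record.items()}
        if isinstance(row.get("email"), str):
            row["email"] = row["email"].lower()

        # Validation: every required field must be present and non-empty.
        missing = [f for f in required if row.get(f) in (None, "")]
        if missing:
            rejected.append((record, "missing: " + ", ".join(missing)))
            continue

        # Deduplication: keep only the first record seen for each id.
        if row["id"] in seen_ids:
            rejected.append((record, "duplicate id"))
            continue
        seen_ids.add(row["id"])
        clean.append(row)
    return clean, rejected


# Hypothetical input batch, for illustration only.
raw = [
    {"id": 1, "email": " Ana@Example.com "},
    {"id": 2, "email": ""},
    {"id": 1, "email": "ana@example.com"},
    {"id": 3, "email": "li@example.com"},
]
clean, rejected = clean_records(raw)

# A very small quality report: how many rows passed and why others failed.
quality_report = {
    "input_rows": len(raw),
    "clean_rows": len(clean),
    "rejected": [reason for _, reason in rejected],
}
print(quality_report)
```

Keeping rejected rows together with a reason, rather than silently dropping them, is what makes the quality report possible. In a production pipeline the same checks would more likely run in a tool such as Apache Spark or inside the warehouse.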

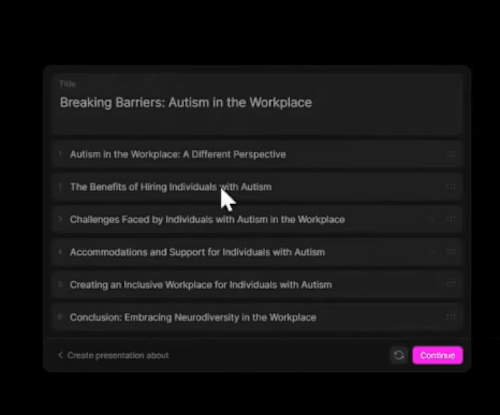


Starting at $25/hr

Tags

Cloud Storage

Java

MongoDB

Python

SQL

AI Developer

Fullstack Engineer

Web Developer

Service provided by

Badar Masood, Multan, Pakistan

