The MLOps Pipeline Kickstart

Ali Beheshti

Stop letting your AI experiments vanish into unorganized notebooks. I help data teams transition from manual, high-risk workflows to production-grade MLOps pipelines. This service builds the "Traceability Skeleton" your business needs to ensure that every model you build is 100% reproducible, auditable, and ready for the real world.

What’s Included (The Deliverables)

I will implement a standard, scalable foundation for one of your core ML projects within 14 days:

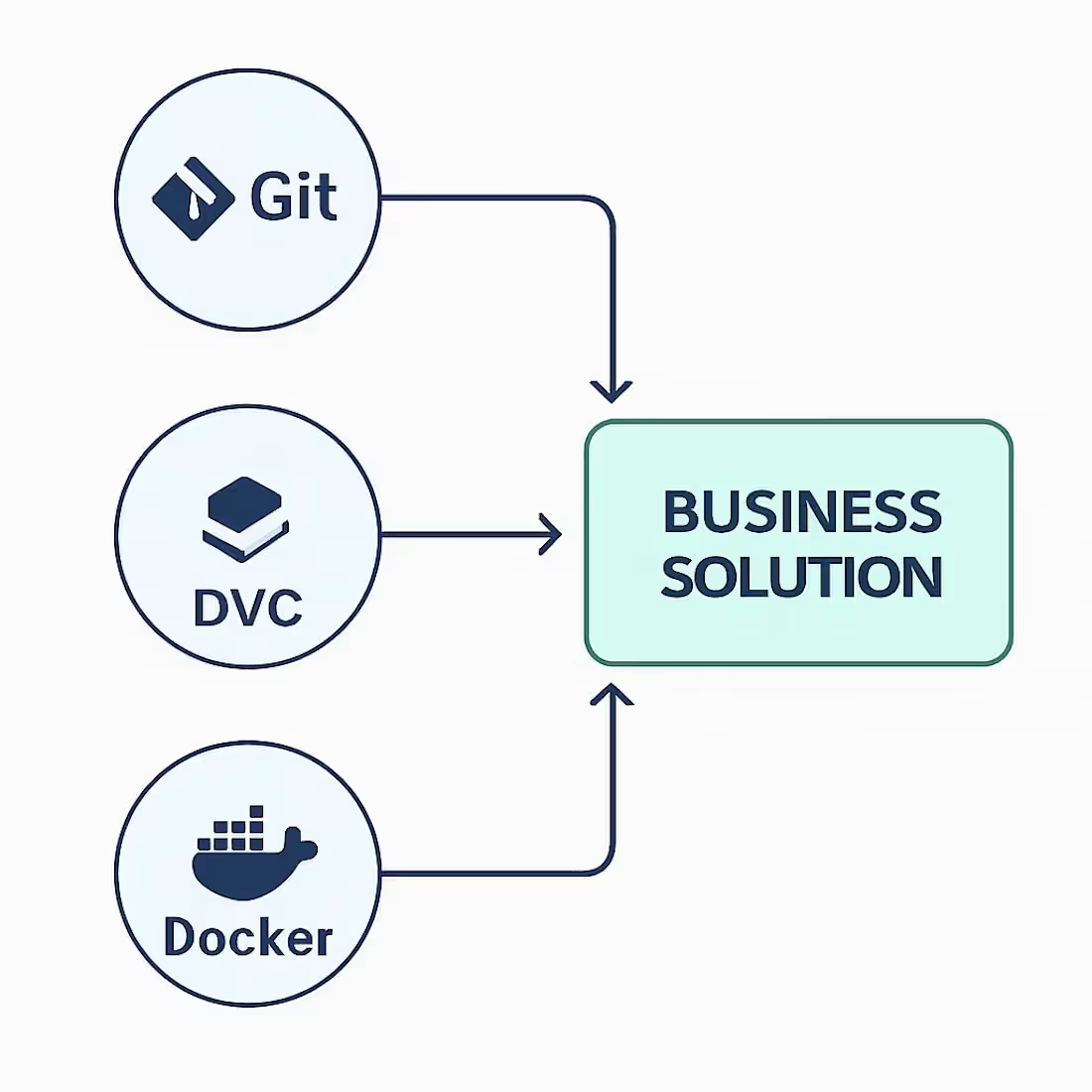

Data Lineage Setup: Full implementation of DVC to version your datasets, ensuring you never lose track of which data produced which model.

Experiment Dashboard: Integration of MLflow to track every hyperparameter, metric, and training run visually.

Validation Gates: Custom Python scripts to automatically audit your data for "silent failures" (nulls, schema shifts) before training begins.

Infrastructure as Code: A tailored Dockerfile and CI/CD configuration (GitHub Actions) so your model builds and runs the same way on every server, every time.
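To make the idea of a validation gate concrete, here is a minimal sketch of what such a check might look like. It is an illustration only, not the script delivered in an engagement: the column names in `EXPECTED_SCHEMA`, the `GateReport` class, and the `validate_rows` function are all hypothetical, and it uses only the Python standard library rather than any particular data-validation package.

```python
from dataclasses import dataclass, field

# Hypothetical schema for illustration: column name -> expected Python type.
EXPECTED_SCHEMA = {"user_id": int, "age": int, "plan": str}


@dataclass
class GateReport:
    """Collects every problem found so the caller sees them all at once."""
    errors: list = field(default_factory=list)

    @property
    def passed(self) -> bool:
        return not self.errors


def validate_rows(rows, schema=EXPECTED_SCHEMA, max_null_fraction=0.0):
    """Check rows (a list of dicts) for schema shifts, type drift, and nulls."""
    report = GateReport()
    if not rows:
        report.errors.append("dataset is empty")
        return report

    null_counts = {col: 0 for col in schema}
    for i, row in enumerate(rows):
        # Schema shift: columns added or dropped relative to the expectation.
        missing = set(schema) - set(row)
        extra = set(row) - set(schema)
        if missing or extra:
            report.errors.append(
                f"row {i}: missing={sorted(missing)} extra={sorted(extra)}"
            )
            continue  # skip per-column checks for a malformed row
        for col, expected_type in schema.items():
            value = row[col]
            if value is None:
                null_counts[col] += 1
            elif not isinstance(value, expected_type):
                report.errors.append(
                    f"row {i}: {col} is {type(value).__name__}, "
                    f"expected {expected_type.__name__}"
                )

    # Nulls: fail any column whose null fraction exceeds the allowed threshold.
    for col, count in null_counts.items():
        if count / len(rows) > max_null_fraction:
            report.errors.append(
                f"{col}: {count} null value(s) out of {len(rows)} rows"
            )
    return report
```

In a setup like this, the training entry point would typically call `validate_rows` first and exit with a non-zero status when `report.passed` is false, so that the pipeline run or CI job stops before any training happens.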

The Process

Audit: A 60-minute deep dive into your current data stack and business goals.

Scaffolding: I build the directory structure and initialize your Git/DVC repositories.

Pipeline Construction: I write the dvc.yaml and train.py logic to automate your specific workflow.

Handover: A final walkthrough and documentation so your team can run dvc repro with total confidence.

Who This is For

Startups moving their first model from research to a live app.

Lean Tech Teams who need DevOps rigor without hiring a full-time engineer.

Legacy Enterprises modernizing CSV/Excel-based data workflows into professional pipelines.

Starting at $1,500

Duration: 2 weeks

Tags

Docker

Python

Data Engineer

Artificial Intelligence

DevOps Engineering

MLflow, DVC

MLOps, CI/CD

Software Engineering

Service provided by

Ali Beheshti, Herndon, USA
