LLM Application Development

Abrar Mohtasim

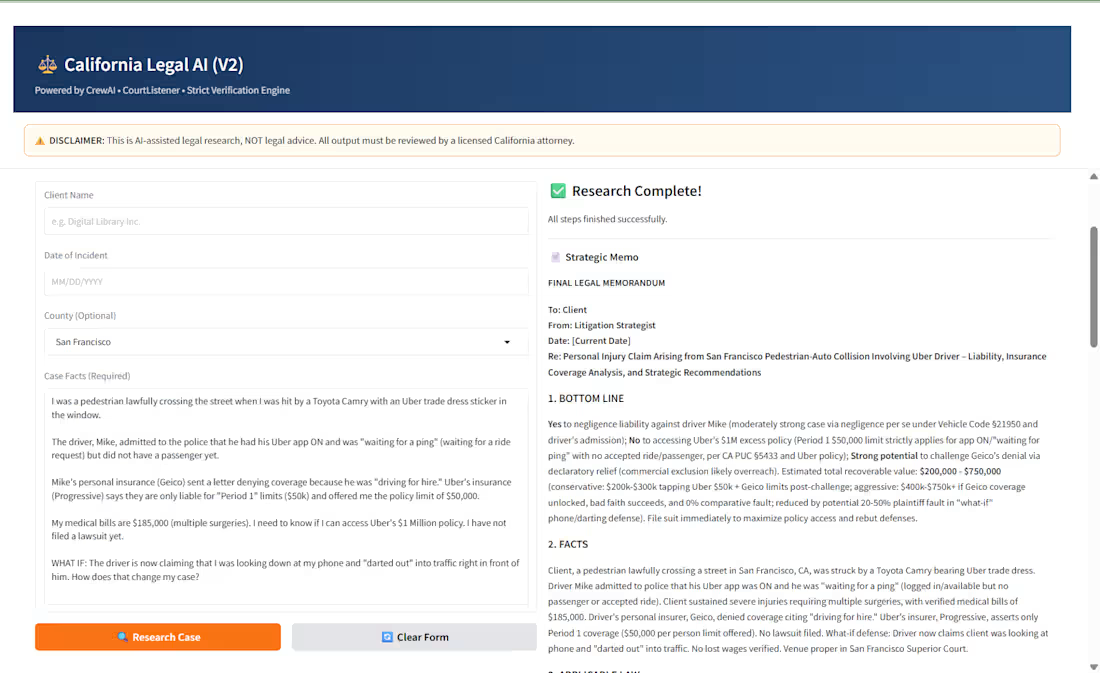

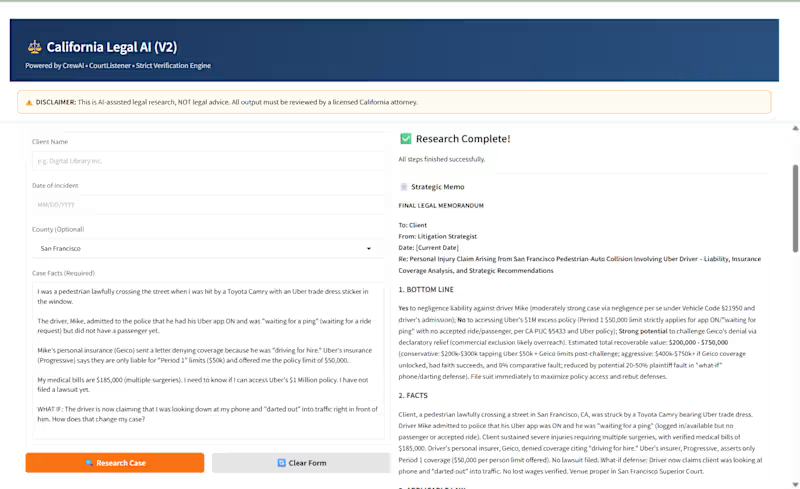

I build production-ready LLM-powered applications, not just demos. From tool calling and prompt engineering to anti-hallucination systems and grounded generation, I design AI apps that behave reliably in real business environments and deliver them live via Gradio, Streamlit, or an API.

What's included:

LLM Integration & Prompt Engineering

Connect your app to leading LLMs (GPT-4, Claude, Mistral, Phi) via OpenRouter or native APIs. Includes system prompt design, few-shot examples, and output formatting for consistent, high-quality responses.
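As a rough illustration of the prompt design and output formatting described above, here is a minimal sketch. It assumes the chat-style `role`/`content` message format that OpenAI-compatible endpoints accept; the helper names `build_messages` and `parse_reply` are hypothetical, and the actual API call is left out.

```python
import json


def build_messages(system_prompt, few_shot, user_input):
    """Assemble a chat message list: system prompt, few-shot pairs, then the new input."""
    messages = [{"role": "system", "content": system_prompt}]
    for example_input, example_output in few_shot:
        # Each few-shot example is shown as a user turn followed by the ideal reply.
        messages.append({"role": "user", "content": example_input})
        messages.append({"role": "assistant", "content": json.dumps(example_output)})
    messages.append({"role": "user", "content": user_input})
    return messages


def parse_reply(raw_reply, required_keys):
    """Parse a model reply as JSON; return None if it is malformed or missing keys."""
    try:
        data = json.loads(raw_reply)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict) or not required_keys.issubset(data):
        return None
    return data
```

Returning `None` on a malformed reply lets the calling code decide whether to retry or fall back, rather than passing unvalidated text downstream.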

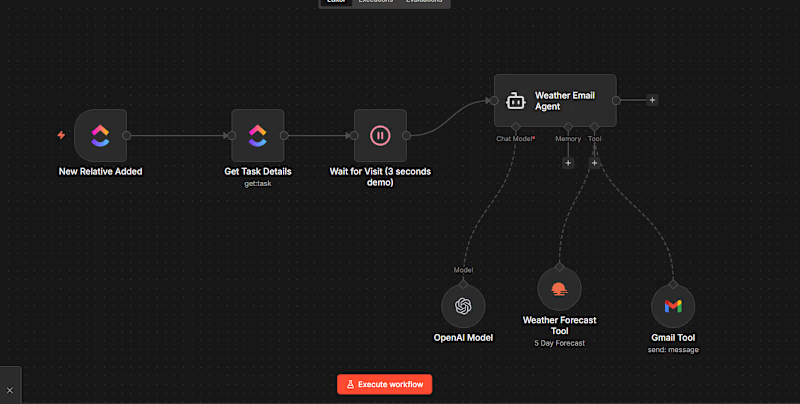

Tool Calling & Function Layer

Design a deterministic tool layer so your LLM can call external APIs, run SQL queries, trigger automations, or look up live data, with fallback logic so a failed tool call does not leave the app stuck.
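One way such a tool layer with fallback logic could look is sketched below. This is an illustration under stated assumptions, not a fixed implementation: `ToolRegistry`, `lookup_order`, and the in-memory order table are all hypothetical names and data.

```python
from typing import Any, Callable, Dict


class ToolRegistry:
    """Maps tool names to plain Python functions and never raises on a bad call."""

    def __init__(self) -> None:
        self._tools: Dict[str, Callable[..., Any]] = {}

    def register(self, name: str, func: Callable[..., Any]) -> None:
        self._tools[name] = func

    def call(self, name: str, arguments: Dict[str, Any],
             fallback: str = "Tool unavailable.") -> Dict[str, Any]:
        tool = self._tools.get(name)
        if tool is None:
            # The model asked for a tool that does not exist.
            return {"ok": False, "result": fallback, "error": f"unknown tool: {name}"}
        try:
            return {"ok": True, "result": tool(**arguments), "error": None}
        except Exception as exc:
            # Any tool failure is converted into a fallback result.
            return {"ok": False, "result": fallback,
                    "error": f"{type(exc).__name__}: {exc}"}


def lookup_order(order_id: str) -> str:
    """Hypothetical tool backed by an in-memory table instead of a real API."""
    orders = {"A100": "shipped"}
    return orders[order_id]


registry = ToolRegistry()
registry.register("lookup_order", lookup_order)
```

Because `call` always returns a dictionary with the same three keys, the calling loop can pass either the result or the fallback text back to the model without special cases.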

Anti-Hallucination & Grounded Generation

Implement citation-based outputs, context anchoring, and output validation so your app answers only from the context it has been given. Built-in refusal behavior when context is insufficient.
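A minimal sketch of citation-based output validation with refusal follows. It assumes the model is instructed to cite context chunks as `[1]`, `[2]`, and so on; the function name and refusal text are hypothetical. Note that it only checks that citations exist and point at real chunks, not that the cited chunk actually supports the claim.

```python
import re

REFUSAL = "I don't have enough information in the provided context to answer that."


def validate_grounded_answer(answer, context_chunks):
    """Return the answer only if it cites at least one chunk and every cited id exists."""
    cited = set(re.findall(r"\[(\d+)\]", answer))
    # Chunks are numbered from 1 in the prompt (an assumption of this sketch).
    valid_ids = {str(i) for i in range(1, len(context_chunks) + 1)}
    if not cited or not cited.issubset(valid_ids):
        return REFUSAL
    return answer
```

An answer with no citation, or one that cites a chunk number that was never supplied, is replaced by the refusal message.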

Gradio / Streamlit App Deployment

Package your LLM app as a live, interactive web application with a clean UI. Deployed to Hugging Face Spaces or your own hosting for immediate stakeholder access.

Testing, Evaluation & Documentation

Run held-out evaluation sets to measure accuracy, citation rate, and refusal behavior. Full technical documentation included so your team can maintain and extend the app.
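The three metrics named above could be computed along these lines. This is a sketch only: the `EvalCase` fields, the substring-match notion of "correct", and the metric definitions are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class EvalCase:
    expected: Optional[str]  # None means the app should refuse this question
    answer: str              # what the app actually returned
    refused: bool            # whether the app refused to answer
    has_citation: bool       # whether the answer carried a citation


def _ratio(numerator: int, denominator: int) -> float:
    return numerator / denominator if denominator else 0.0


def score(cases: List[EvalCase]) -> Dict[str, float]:
    answerable = [c for c in cases if c.expected is not None]
    unanswerable = [c for c in cases if c.expected is None]
    answered = [c for c in cases if not c.refused]
    # A refusal on an answerable question counts as incorrect.
    correct = sum(
        1 for c in answerable
        if not c.refused and c.expected.lower() in c.answer.lower()
    )
    return {
        "accuracy": _ratio(correct, len(answerable)),
        "citation_rate": _ratio(sum(c.has_citation for c in answered), len(answered)),
        "correct_refusal_rate": _ratio(sum(c.refused for c in unanswerable),
                                       len(unanswerable)),
    }
```

Keeping unanswerable questions in the held-out set is what makes refusal behavior measurable at all; without them, only accuracy and citation rate can be reported.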

LangChain, LiteLLM, OpenRouter, Hugging Face, Gradio, Streamlit, Prompt Engineering, LLM Developer, AI Developer

Starting at $500

Duration: 1 week

Tags

AI Agent Developer

AI Agent Engineer

AI Application Developer

AI Automation

AI Developer

AI Voice Developer

Low-Code/No-Code Developer

Prompt Engineer

Artificial Intelligence

Service provided by

Abrar Mohtasim, Chattogram, Bangladesh
