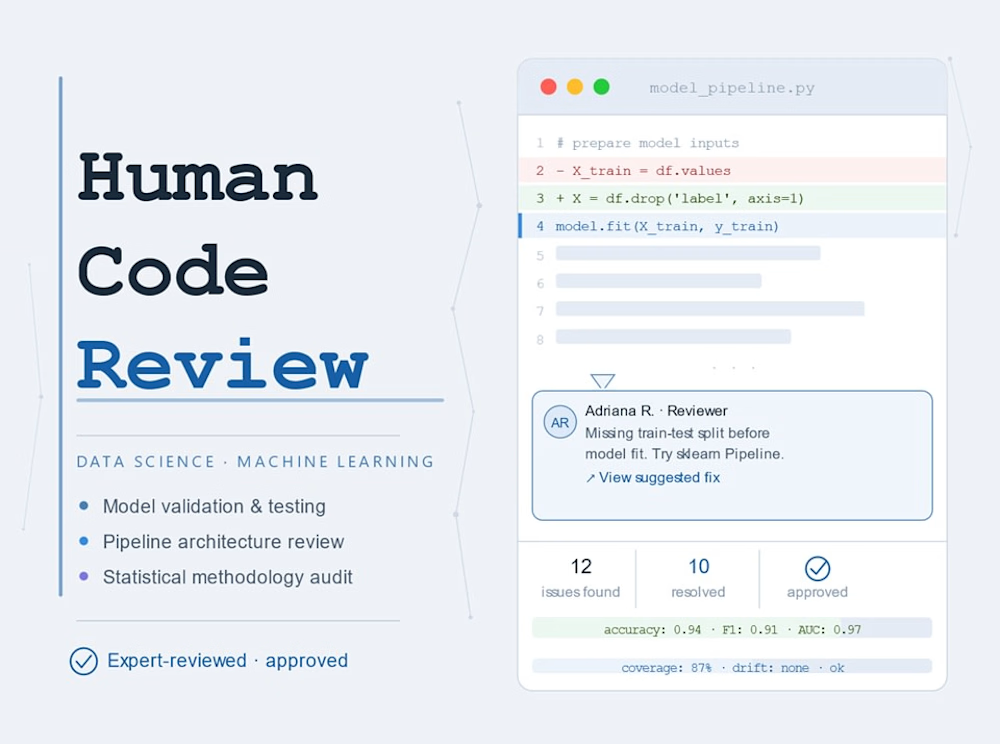

AI coding agents are fast. They're also great at accumulating redundant functions, ignoring pipeline integrity, leaking features in ways that look fine until they don't, and writing code that technically runs but nobody could maintain.

This is a human review, by a practitioner with 6 years of healthcare analytics experience, specifically for codebases where agents have been doing the building.

I look for what agents consistently miss: redundant or contradictory logic across files, silent data leakage, modeling assumptions that drifted from the original intent, metrics that don't match the business problem, and structural debt that compounds the longer you let it grow.

What's included:

- Line-level comments via GitHub PR, Jupyter, or annotated file
- A written summary of key risks, redundancies, and structural concerns
- One follow-up Q&A exchange (async)

Good fit for:

- Agent-assisted or Cursor/Copilot-heavy ML projects that need a sanity check
- Predictive models (classification, regression, survival) before handoff or deployment
- sklearn Pipelines, MLflow setups, feature engineering scripts
- Healthcare or clinical data workflows
- Research-grade analysis preparing for peer review

Deliverable: Written review returned within 3 business days

Get it for $149.99

Spot Check

$149.99

Up to 3 files / ~300 lines

Quick scan for agent-heavy codebases: redundant logic, obvious leakage, structural red flags. Good for smaller scripts or a single pipeline.

Tags

Docker

Python

R

Product created by

Adriana E. Reyes (Constitución, Chile)
